The Cost of Always-On Agents is Less Than You Might Think

The OpenClaw era is here. And it's surprisingly affordable.

There’s a growing assumption in AI right now:

If agents are always running, costs will spiral.

This sounds reasonable. More autonomy should mean more tokens and more compute. More tokens and more compute should mean higher bills.

But that mental model is already breaking. Why? Because it assumes you’re paying for outputs—individual prompts and responses.

In reality, with new agentic systems like OpenClaw, you’re paying for something very different:

Ongoing throughput—work completed over time.

Once we understand that shift, from paying for individual prompts and outputs to paying for ongoing throughput backed by persistent memory, the economics start to look completely different.

The Outdated Way to Think About Cost

Most teams still evaluate AI like an API:

Cost per token

Cost per request

Cost per response

That might work for chat, but it fails for agents. Agents don’t just respond once. They plan, break work into steps, execute across tools, revisit and improve outputs, and (if everything is working correctly) keep operating after the initial trigger.

So the real question isn’t “how much does this prompt cost?” but “how much useful work can I get done for a small amount of money?”

What the Data Actually Shows

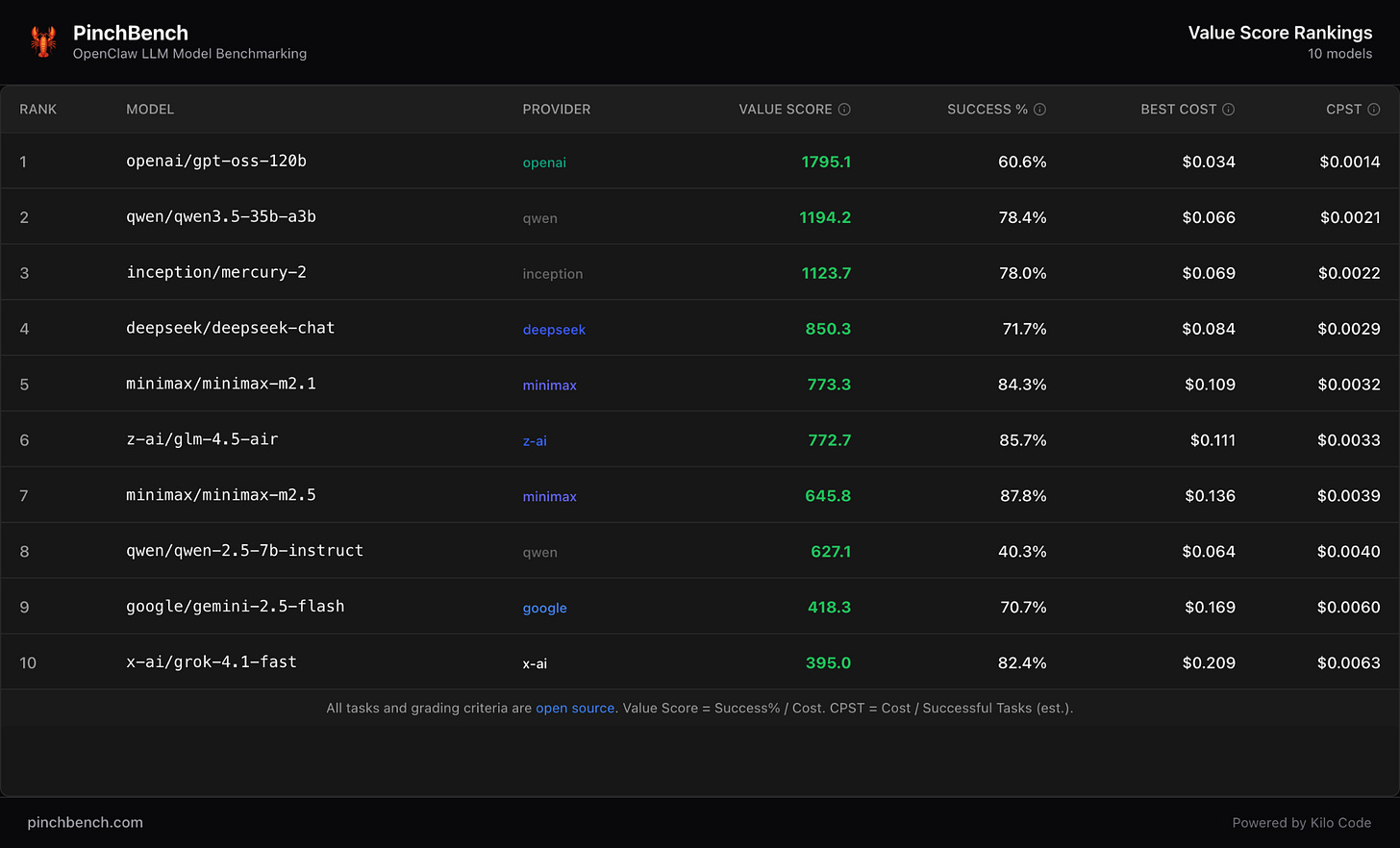

Benchmarks like PinchBench measure something more meaningful than tokens: cost per completed agent task.

Here’s a snapshot of current value rankings. A few things jump out immediately:

High-value models like Opus complete full tasks for $0.03–$0.13

Even strong mid-tier models like Kimi K2.5 stay well under $0.50 per task

Average success rates cluster surprisingly close together (65–85%) despite major cost differences

This leads to a non-obvious conclusion:

You’re often paying 10–20x more for marginal gains in quality.
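One way to sanity-check that conclusion is to fold the success rate into the price. The sketch below is a minimal illustration, not PinchBench's methodology: it assumes a failed attempt is simply retried at the same cost, and the two models and their numbers are hypothetical values picked from inside the ranges quoted above.

```python
def cost_per_success(cost_per_attempt: float, success_rate: float) -> float:
    """Expected spend to get one successful task.

    Assumes each failed attempt is retried independently at the same
    cost, so the expected number of attempts is 1 / success_rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt / success_rate


# Hypothetical models (illustrative numbers, not benchmark results).
cheap = cost_per_success(cost_per_attempt=0.05, success_rate=0.65)
pricey = cost_per_success(cost_per_attempt=0.75, success_rate=0.85)

print(f"cheap model:  ${cheap:.3f} per successful task")
print(f"pricey model: ${pricey:.3f} per successful task")
print(f"ratio:        {pricey / cheap:.1f}x")
```

Under these assumptions, a 15x gap in sticker price shrinks only slightly once retries are counted, because a 20-point difference in success rate cannot offset a price gap that large.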

What $10 Gets You in OpenClaw

We often have free models like Nemotron 3 Super, Trinity Large Preview, and MiMo-V2-Pro available in Kilo, but even if you opt for paid models, $10 goes a long way. A ten-spot will buy you a lot more than 10 turns in your agent chat.

Let’s translate those numbers into something real.

Without Agents: Linear Output

If you’re coding or prompting manually:

You rely on frontier models

You resend context every time

You manually trigger every step

Work stops when you stop

$10 gets you around 2–4 meaningful tasks. Then it’s on to the next project.

With KiloClaw: Compounding Output

With a hosted OpenClaw agent like KiloClaw, that same $10 is distributed across a system:

sub-agents handling different responsibilities

multiple model tiers with different costs

cached context reused across runs

scheduled execution instead of constant prompting

In KiloClaw, $10 gets you around 20–150+ agent task executions.

Of course there’s some variance depending on which tasks and skills you’re focused on. But still. This is huge. And it’s honestly a lot more than we were expecting when we started spinning up claws.
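To make the comparison concrete, here is the arithmetic implied by the two task ranges quoted above (2–4 manual tasks versus 20–150 agent executions per $10). This is a rough sketch: the function names are made up for illustration, and a "meaningful task" and an "agent task execution" are not necessarily the same unit of work.

```python
import math

BUDGET_USD = 10.00


def implied_cost_per_task(budget: float, tasks: int) -> float:
    """Average spend per task if `budget` covers exactly `tasks` tasks."""
    return budget / tasks


def tasks_for_budget(budget: float, cost_per_task: float) -> int:
    """Whole number of tasks a budget covers at a fixed per-task cost."""
    return math.floor(budget / cost_per_task)


# Ranges quoted in the article: (low cost, high cost) per task.
manual_range = (implied_cost_per_task(BUDGET_USD, 4),
                implied_cost_per_task(BUDGET_USD, 2))
agent_range = (implied_cost_per_task(BUDGET_USD, 150),
               implied_cost_per_task(BUDGET_USD, 20))

print(f"manual: ${manual_range[0]:.2f} to ${manual_range[1]:.2f} per task")
print(f"agent:  ${agent_range[0]:.3f} to ${agent_range[1]:.2f} per task")
```

The agent range works out to roughly $0.07 to $0.50 per execution, which lines up with the per-task benchmark costs cited earlier.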

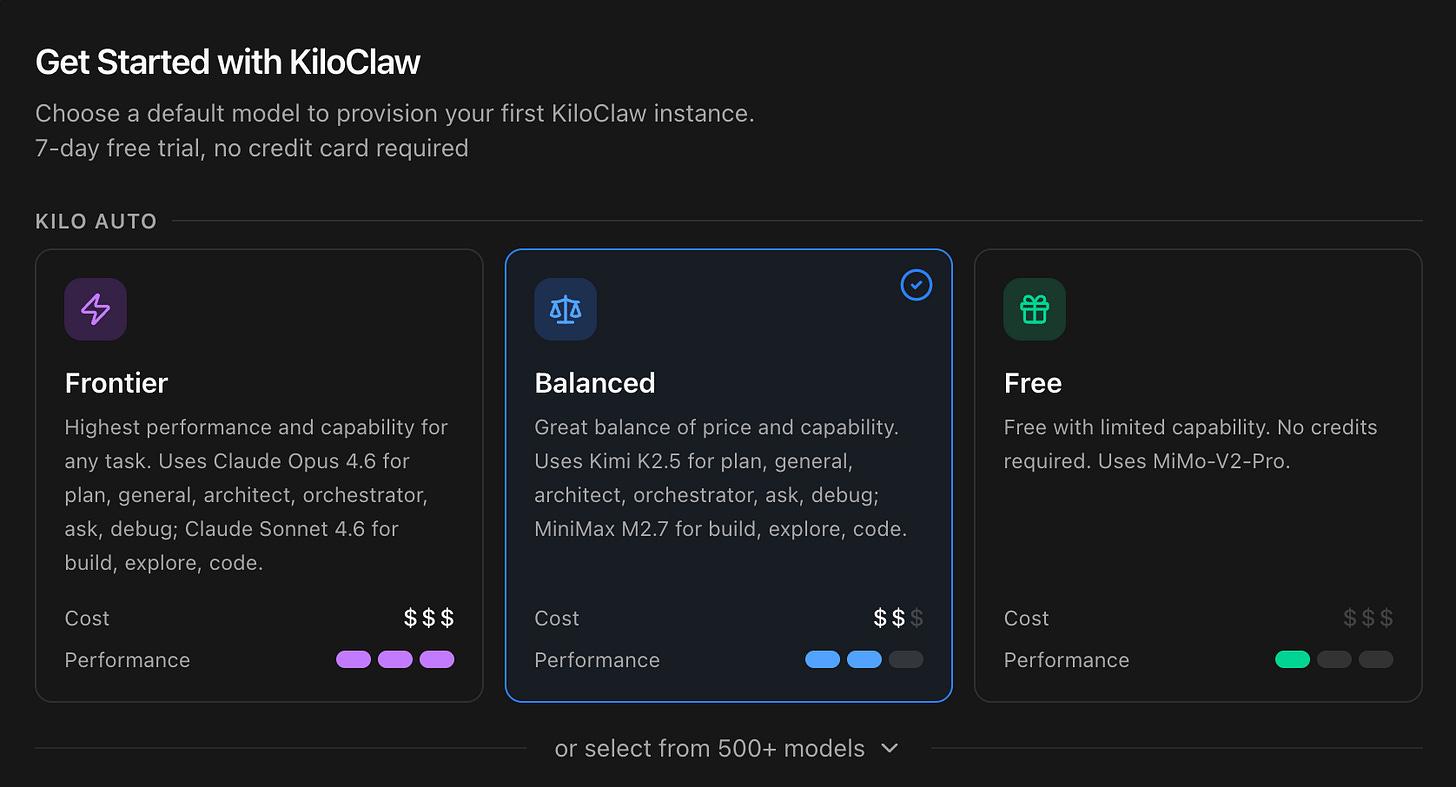

More importantly, the system keeps working after you stop. Sub-agents reduce waste, memory persists, and auto model routing can cut costs by a further 5–10x. Most agentic tasks don’t actually need the “best” model. Auto routing is now available in several modes in Kilo, including in KiloClaw: pick a mode during onboarding and change it at any time.

Looking to take advantage of models that are highly efficient but still very capable, like Kimi K2.5 and MiniMax M2.7? Choose Balanced Mode and we’ll route between models for you.
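To see why routing can move the bill this much, consider a toy router that sends each task to the cheapest tier trusted with its difficulty. This is only a sketch of the general idea, not how Kilo's auto routing actually works: the tier names, per-task prices, difficulty scores, and workload below are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    cost_per_task: float  # hypothetical USD per task
    max_difficulty: int   # hardest task (1-5) this tier is trusted with


# Hypothetical tiers, ordered cheapest first.
TIERS = [
    Tier("efficient", 0.02, 2),
    Tier("balanced", 0.10, 4),
    Tier("frontier", 0.60, 5),
]


def route(difficulty: int) -> Tier:
    """Return the cheapest tier whose ceiling covers the task."""
    for tier in TIERS:
        if difficulty <= tier.max_difficulty:
            return tier
    raise ValueError(f"no tier can handle difficulty {difficulty}")


# Hypothetical workload: mostly easy tasks, a few hard ones.
workload = [1, 1, 2, 2, 2, 3, 3, 4, 1, 5]

routed_cost = sum(route(d).cost_per_task for d in workload)
frontier_only_cost = TIERS[-1].cost_per_task * len(workload)

print(f"routed:        ${routed_cost:.2f}")
print(f"frontier only: ${frontier_only_cost:.2f}")
print(f"savings:       {frontier_only_cost / routed_cost:.1f}x")
```

With this made-up mix, only one task in ten reaches the most expensive tier, and that is where the savings come from. A workload dominated by hard tasks would save far less.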

Why “Agentic Engineering” Was Inevitable

This isn’t just a cost story. It’s a shift in how software gets built, whether that’s full production software for a new startup or your own personal AI assistant with something like KiloClaw.

We’re entering the era of agentic engineering—where multiple agents collaborate across planning, implementation, debugging, and deployment.

This isn’t hype. It’s already happening:

Code gets written, reviewed, and deployed in a single loop

Long-running tasks move into persistent cloud agents

Developers supervise systems instead of executing every step

The role of the developer is changing from builder to orchestrator. And with OpenClaw, the role of everyday AI users is changing too: from consumer to conductor.

And once that happens, cost behaves differently. Efficiency is no longer about a single request—it’s about how well the system runs over time.

Platforms that unify this workflow—IDE, CLI, cloud, and collaboration—don’t just improve productivity. They become the default interface for building software. This is what we’ve been building at Kilo since the beginning, and the rise of KiloClaw is just the next phase of this (very fast) evolution.

Check out PinchBench for the best OpenClaw benchmarks, and launch your own claw in minutes with Kilo! 🦀
