Live Q&A with Anthropic: Webinar Recap

We hosted a live roundtable session about Claude Opus 4.5. Here's how it went.

Two days after we hosted a webinar that drilled into the technical specs and features of Claude Opus 4.5 — just three hours after its launch — we did something different: we opened it up.

We sat down for a roundtable discussion on how Opus 4.5 is changing AI coding, and then brought on Marius from Anthropic’s Applied AI team to answer the questions that everyone has about the latest frontier AI model.

» Watch the full recording here: Live Q&A with Anthropic

Starter Topic: How different does AI coding feel now vs. a year ago?

Answer: A lot different.

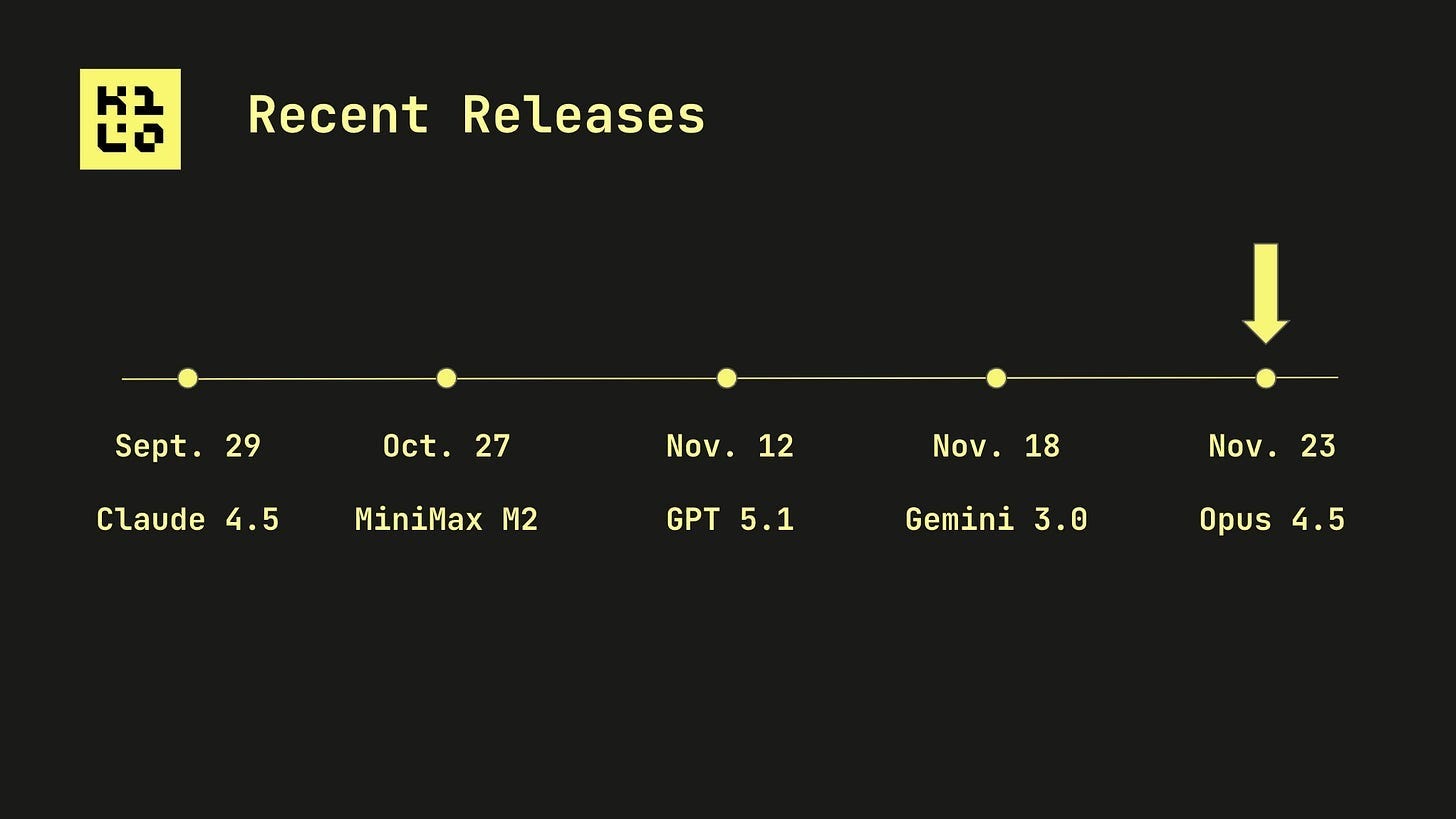

November 2024: Claude Sonnet 3.5 hit 49% on SWE Bench Verified (a benchmark testing real-world bug fixing).

November 2025: Opus 4.5 just broke 80%. First model ever to do so.

But it’s not just about the numbers. The models understand intent better now. A year ago, you had to be a prompt engineer to get anything useful. Now? It feels like the models just infer what you meant to say, even when you didn’t articulate it.

Front-end capabilities have exploded too. One prompt can now generate a working app with a unique, aesthetic UI, as opposed to the “AI-Slop” cookie-cutter stuff we were getting just months ago.

The paradox of choice

Here’s the flip side: we’re drowning in new models.

A new frontier model drops nearly weekly. Which one do you use? When? How do you evaluate them when benchmarks like SWE Bench start maxing out?

Brendan from the Kilo team nailed it:

“Your expertise in specific syntax matters less now. Your expertise in the engineering part of software engineering matters more.”

Architecture. Steering. Knowing what you want. That’s what separates good AI-assisted code from bad.

Junior developers, senior leverage, and the sweet spot

We talked about treating AI agents like junior developers: eager, tireless, but sometimes confidently wrong.

Question: What does Opus 4.5 mean for actual junior devs? For engineering leaders managing teams?

Answer: Syntax becomes less important. Engineering judgment becomes everything.

As an example, Simon Willison and Theo from T3 both used Opus 4.5 to refactor major codebases. The model didn’t replace them, but instead amplified their senior-level decision-making. They knew what they wanted. The model just executed faster and more accurately than before.

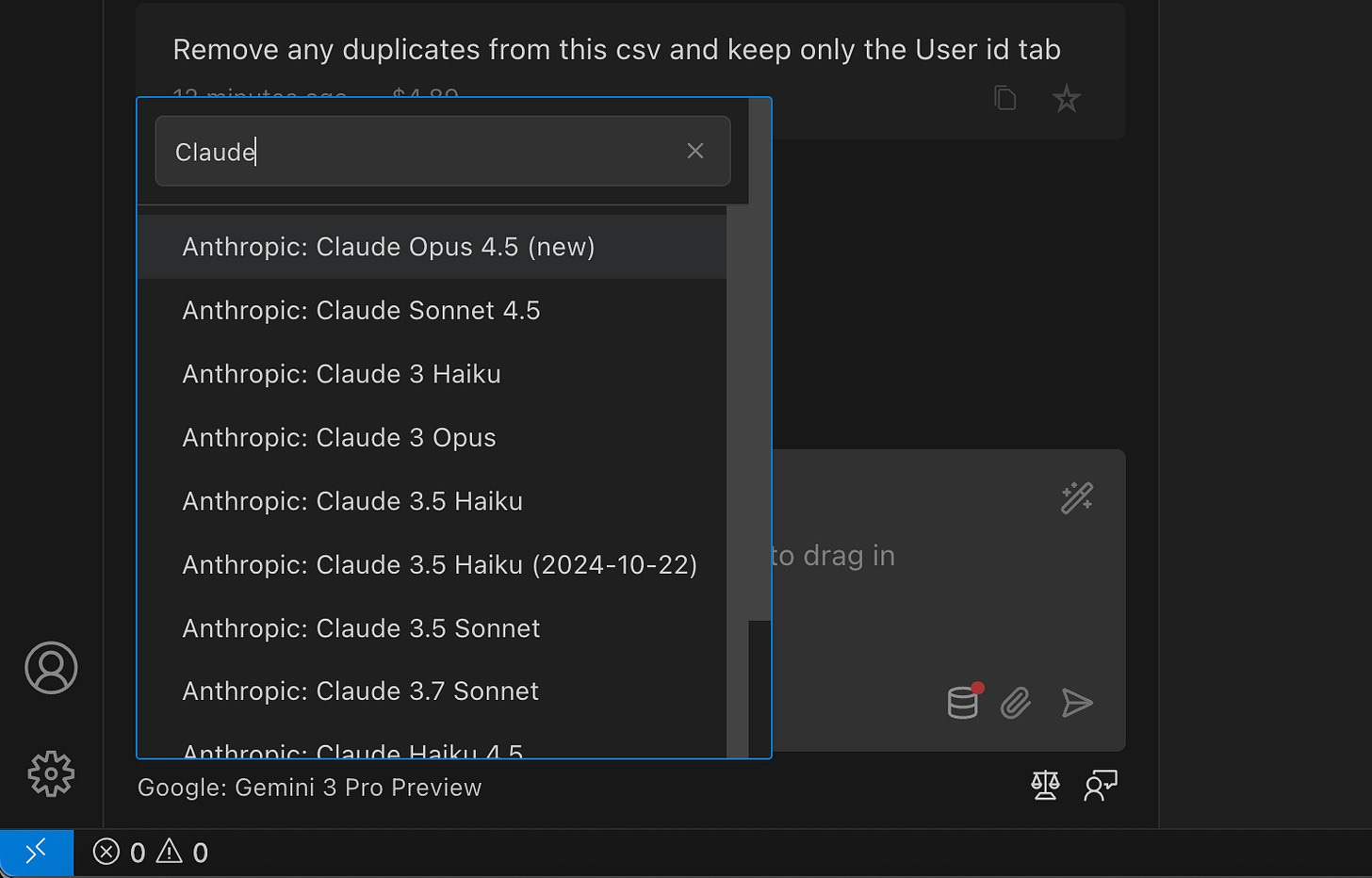

When to use Opus vs. Sonnet vs. Haiku

Our take: Use Opus for planning. Use less expensive models for execution.

Frontier models like Opus 4.5 are incredible at long-horizon reasoning and making smart decisions. But once you have a solid spec, cheaper models can implement it just fine.

Marius’s rule of thumb: Start with Opus to see what the task costs, not just what the prompt costs. Token efficiency over the long run often makes the pricier model worth it.

And if you’re building agents? Lean into Opus. The smarter upfront decisions mean fewer turns, which saves tokens overall.

Inside look: What Anthropic is thinking about

Marius joined us for a Q&A, and here’s what stood out:

On long-horizon tasks:

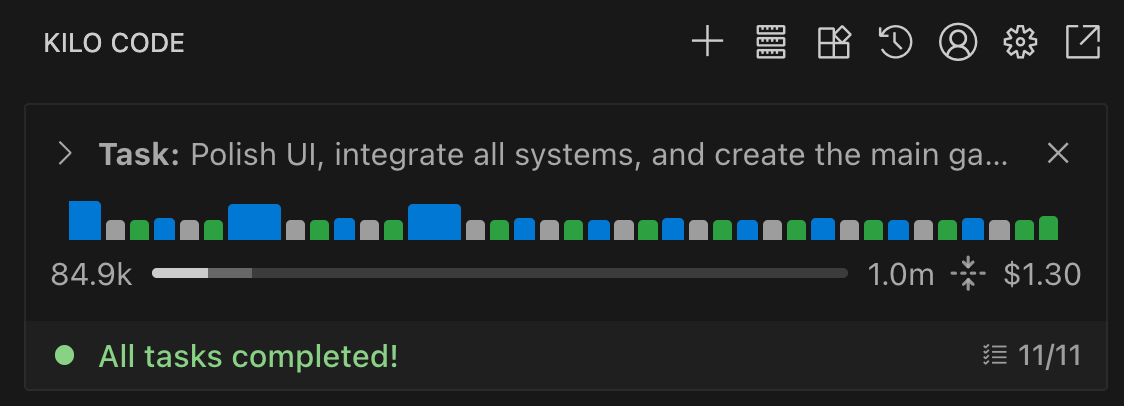

We managed a 30-hour coding session with Sonnet 4.5. Opus did even better. The goal is a world where you kick off a task and it just... gets done.

On the 200K context window:

It’s not a ceiling, it’s just sequencing. The 1M token context is likely coming.

On new features:

Advanced tool use (programmatic tool calling, tool search) is a game-changer for agents dealing with high-volume data. No more polluting context windows with massive logs or metrics.

On the future:

Maybe we’re all becoming Engineering and Product Managers? Maybe we’re becoming tastemakers of what feels good.

A fun fact:

Opus 4.5 cracked Anthropic’s own senior performance engineer take-home test. Better than any human candidate. Which is... slightly concerning, but mostly exciting! 😄

The questions you asked

We got a bunch of great questions from the audience. Here are a few highlights:

Q: How should we prompt Opus 4.5?

» Clean up your prompts for Opus 4.5. It’s more aligned, follows intent better, but if you have contradictory instructions, it’ll try to follow them all and may fail.

Q: How can we reduce costs on large tasks?

» Break them down. Don’t throw a massive, vague prompt at a frontier model and hope for the best. Be specific. Point it in the right direction.

Q: When should we use Orchestrator vs. Architect mode?

» Depends on your workflow! Architect creates the plan. Orchestrator distributes the task across multiple agent modes.

Q: Does Claude 4.5 Opus bring any advances in the construction of autonomous agents, especially in flows where multiple tools and APIs are integrated?

» Opus 4.5 offers major improvements regarding the ability to construct autonomous agents. You can see how Anthropic benchmarks on agentic capabilities here. Tool search, programmatic tool calling, and the new effort feature are some significant upgrades to call out.

What’s next

We’re working on some more content about the minimum understanding that developers need on AI models to do their jobs well. You don’t need to wake up every day worrying about what a new model means for your experience, especially inside Kilo Code, where you can change models without changing your workflow.

But it is helpful to know the basics: What’s this model good at? How long can I let it run before checking in? What’s the right tool for this specific job?

We’ll be here to help inform you every step of the way.

P.S. We gave away $100 of credits to 5 attendees. Congratulations to those who won:

Jan K. 🇮🇪

Mazen B. 🇨🇦

Phillip B. 🇺🇸

Olavi A. 🇪🇪

Benjamin P 🇩🇪

Stay tuned for announcements on future events!