AI Consulting Wins When It Embraces Model Freedom

Picking the right LLM for the job has never been more important

The most interesting AI news this month isn’t a model release. It’s that the biggest AI companies are quietly becoming (big) consulting companies.

Both OpenAI and Anthropic are moving aggressively into consulting and enterprise deployment. OpenAI is reportedly building a massive “Deployment Company” alongside private equity firms and global consultancies, valued at $14 billion right out of the gate. Anthropic just launched its own enterprise services venture backed by Blackstone, Goldman Sachs, and Hellman & Friedman.

And Google recently announced a $750 million fund to help consultants adopt AI, citing large firms such as Accenture and McKinsey. It’s a more measured approach than launching a full consultancy itself, but it’s still no small play. The stakes are high and everybody wants a piece of the prize.

This is not a side story. It’s the clearest signal yet that AI adoption is not going to be won by APIs alone.

But what happens if the big players start offering consultative solutions that are really just designed for single-vendor lock-in?

Everyone in AI Is Now an AI Consultant (And That’s a Good Thing)

The model landscape now changes so fast that “best practices” can become outdated overnight. For the past two years, the dominant narrative around AI has been software margins, automation, and “replace labor with models.” But the frontier labs themselves are now acknowledging something important: AI adoption is still profoundly human work.

In practice, almost everyone serious about AI has become a consultant now. If you’re a builder, you’ve never been more valuable.

That includes founders. Engineers. Open-source maintainers. Partnership teams. Developer advocates. Even power users inside enterprises.

A surprising amount of modern AI work looks less like traditional SaaS deployment and more like a constant stream of config sessions, workflow redesigns, stack evaluations, and internal education. And that’s in addition to the strategy sessions.

When we run a KiloClaw configuration session with a developer team, the conversation rarely starts with a single model anymore. It starts with tradeoffs, or, more accurately, trade-ups.

Which model is best for coding right now? Which one handles long-context reasoning better? Which one is cheapest at scale? Which one works best for agents?

Whether these new consultants ask those kinds of questions will determine whether they help you increase ROI and actually save money, rather than just max out token spend. Management consultants have always centered their value proposition on efficiency and cost-cutting, not just bigger spending, which means model optimization is a core part of the AI adoption playbook. McKinsey, for example, has long cited helping companies sustain cost savings for years to come as one of its key benefits.

And this focus extends to internal work, too. Boston Consulting Group has internally deployed over 36,000 custom GPTs across its 32,000 consultants worldwide, signaling a massive effort to operationalize and optimize AI for knowledge work.

Claude Opus is immensely powerful. But it’s no secret that it can also get very expensive, very fast. Opus fits into key places in a workflow: we’re using it now in our Auto Frontier Model, which automatically picks the best model for the job within set criteria. But if you’re serious about optimizing ROI over time, you should be looking at a much broader spectrum of models and providers, including but not limited to OpenAI and Anthropic.
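To make "the best model for the job within set criteria" concrete, here is a minimal sketch of one possible selection rule: filter a catalog by a quality floor and a context requirement, then take the cheapest option that remains. This is not how Auto Frontier Model actually works; the `ModelOption` fields, the `pick_model` helper, the model labels, and every number below are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str             # hypothetical label, not a real model ID
    quality: float        # illustrative 0-1 score for this kind of task
    cost_per_mtok: float  # illustrative USD per million tokens
    max_context: int      # illustrative context window, in tokens


def pick_model(options, min_quality, needed_context):
    """Return the cheapest option that meets the quality and context criteria."""
    eligible = [
        m for m in options
        if m.quality >= min_quality and m.max_context >= needed_context
    ]
    if not eligible:
        raise ValueError("no model satisfies the criteria")
    return min(eligible, key=lambda m: m.cost_per_mtok)


# Placeholder catalog: the values are invented, not benchmark results.
CATALOG = [
    ModelOption("frontier-large", 0.95, 30.0, 200_000),
    ModelOption("mid-tier", 0.85, 3.0, 128_000),
    ModelOption("small-fast", 0.70, 0.5, 32_000),
]

routine = pick_model(CATALOG, min_quality=0.65, needed_context=8_000)
hard = pick_model(CATALOG, min_quality=0.90, needed_context=100_000)
print(routine.name, hard.name)
```

With these placeholder values, the routine task lands on the cheapest model and only the demanding task pays for the frontier one. In practice the quality scores would come from your own evaluations or a benchmark you trust, and they would need refreshing as models change.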

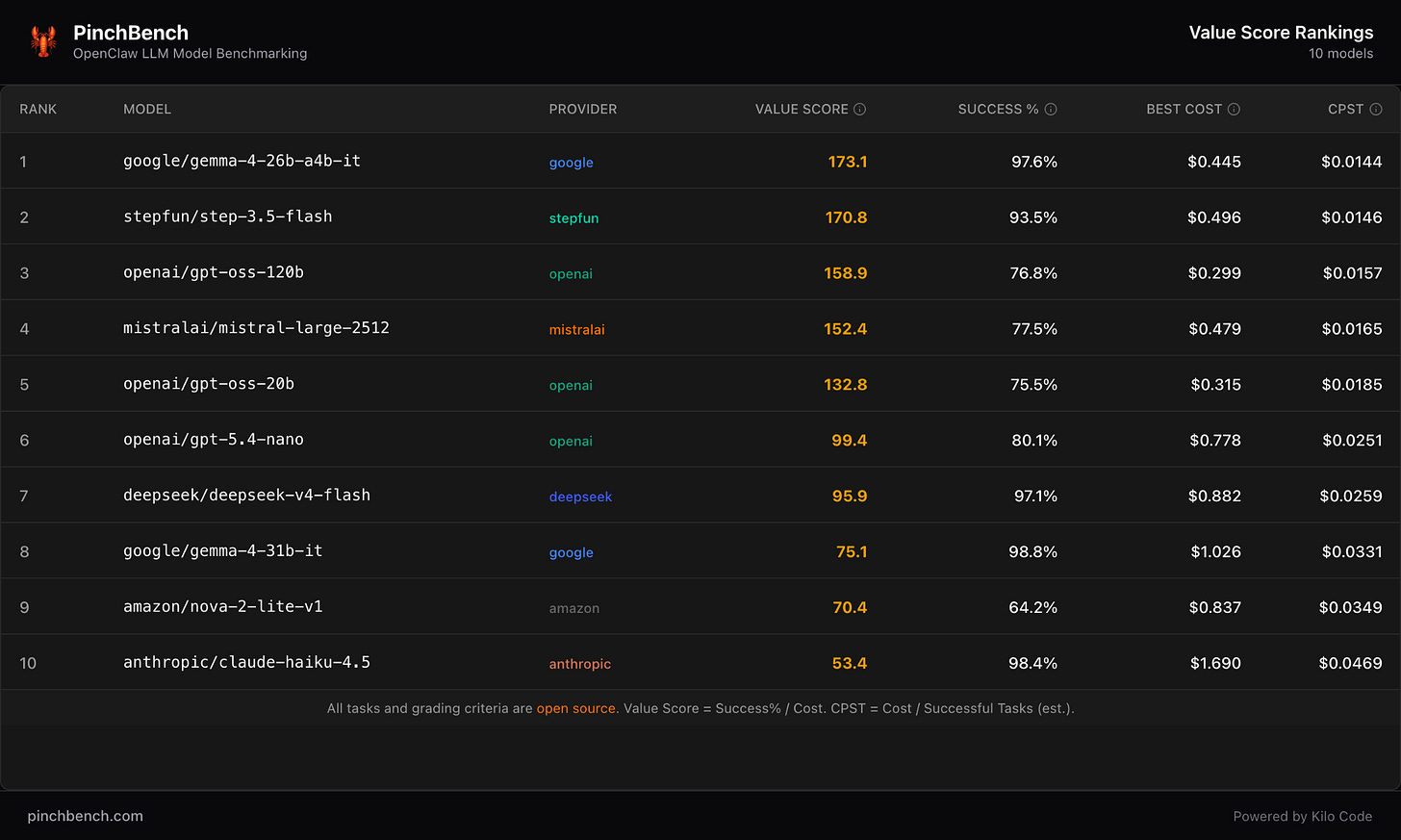

Just look at the recent PinchBench data on value scores for agent work. New models from StepFun and DeepSeek are giving Claude Haiku a run for its money. They aren’t necessarily better, but they might be the right fit for your daily agentic work.

The same conversation about tradeoffs happens in workshops with teams that are frustrated by rising Cursor pricing and exploring alternatives.

The opportunity is enormous. But so is the complexity.

Trading Vendor Lock-in for Smarter Workflows

The rise of AI consulting is good news. It means companies finally understand that “buying a model” is not the same thing as becoming AI-native.

The “big five” consultancies didn’t become giants because businesses lacked software. They became giants because transformation is messy, political, and operational. AI is no different. Arguably it’s even more complex, in part because it touches all of the systems as well as the models and agents that link them together. That’s the orchestration layer.

The smartest AI consulting firms won’t behave like reseller channels for a single lab. They’ll look more like intelligence infrastructure advisors. Part strategist, part systems integrator, part workflow architect, part educator.

Because, let’s be honest, they’ll be learning as much as you are. AI is moving so fast that it has to be developed in tandem with customers.

And increasingly, consultants’ value won’t come from access to, or understanding of, a particular model or model family. It will come from helping organizations navigate constant change without rebuilding their stack every six months. They’ll behave like strategic orchestrators across a constantly shifting model landscape.

That’s what we’ve been building for at Kilo: the option to always have the best model for the job right at your fingertips. It’s also what our lab and inference partners are increasingly building for, as they release both strikingly efficient SOTA models (like GPT-5.5) and sneaky little powerhouses like the Xiaomi MiMo models and Ant Group’s Ling and Ring models, optimized for agentic tool calling. The goal is flexible tools that fit any workflow, so that even as the model landscape evolves, your workflows can travel with it.
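One way to picture workflows that travel with the model landscape is to have each workflow ask for a role (such as "coding") instead of naming a vendor or model. The sketch below is only an illustration under that assumption, not a description of Kilo's implementation. `ModelRegistry`, `fake_backend`, `review_workflow`, and the labels "model-a" and "model-b" are all hypothetical, and the stand-in backends just echo the prompt where a real one would call a provider.

```python
from typing import Callable, Dict

# A "backend" is any callable that turns a prompt into text.
Backend = Callable[[str], str]


def fake_backend(label: str) -> Backend:
    """Build a stand-in backend; a real one would call a provider's API."""
    def complete(prompt: str) -> str:
        return f"[{label}] {prompt}"
    return complete


class ModelRegistry:
    """Maps workflow roles (e.g. "coding") to whichever backend is current."""

    def __init__(self) -> None:
        self._by_role: Dict[str, Backend] = {}

    def assign(self, role: str, backend: Backend) -> None:
        self._by_role[role] = backend

    def run(self, role: str, prompt: str) -> str:
        if role not in self._by_role:
            raise KeyError(f"no model assigned to role {role!r}")
        return self._by_role[role](prompt)


def review_workflow(registry: ModelRegistry, diff: str) -> str:
    """A workflow that knows only its role name, never a vendor or model."""
    return registry.run("coding", f"Review this change: {diff}")


registry = ModelRegistry()
registry.assign("coding", fake_backend("model-a"))
before = review_workflow(registry, "fix typo")

# Swapping the model is a one-line reassignment; the workflow is untouched.
registry.assign("coding", fake_backend("model-b"))
after = review_workflow(registry, "fix typo")

print(before)
print(after)
```

The design choice here is that the only place a model is named is the registry, so changing models every few months means editing an assignment, not rebuilding the workflows that depend on it.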

The winners in the new AI consulting race won’t look like traditional consultancies. They’ll likely be:

- smaller
- faster
- deeply technical but highly creative
- open-source native
- model-agnostic
- agent-first
- and capable of shipping real systems instead of just decks

I’m not discounting the frontier labs. But the initial consultancy offerings they’ve been announcing will need to break down into micro-consultancies to be successful — in many ways mirroring what the frontier labs’ enterprise sales teams and “forward-deployed engineers” have already been doing. Every vertical counts.

The best consultants may not even call themselves consultants. They’ll look more like hybrid studios, deployment partners, infra operators, and ultimately the account managers at the AI tools in your stack.

That’s a healthier ecosystem. And for the consultees to succeed as much as the consultants, that ecosystem will have to be based on model freedom.