The Elephant is Out of the Bag: Meet Ant Group's Ling-2.6-flash

Free in Kilo for another full week

A short time ago, we announced Elephant, a 100B-parameter stealth model from a prominent open model lab.

The response from the Kilo community was fantastic. Across coding tasks, complex document parsing, and dynamic agentic workflows, your feedback was consistent: Elephant was extremely fast and capable. Speculation immediately took off on X and Discord. Was it a new proprietary model from a well-known tech giant? A highly tuned open-source derivative? A completely new architecture?

Today, it is time to address the Elephant in the room and take off the mask.

We’re excited to officially reveal that the stealth model you’ve been using in your day-to-day coding workflows and agentic assistants is none other than Ant Group’s Ling-2.6-flash.

After all, you can’t spell Elephant without ant!

By releasing Ling-2.6-flash under a pseudonym, we wanted to let the model’s performance speak entirely for itself, free from any pre-existing brand bias or market expectations. The community’s blind tests confirmed what we already suspected: this model is an absolute powerhouse for developers building next-generation AI applications. With super fast inference from Novita, it was a win-win.

But the Kilo community didn’t just test Elephant. You actively helped refine how it operates. During the stealth phase, we received some great community PRs improving the system prompts and refining the integration. Thanks to your collaborative optimizations, the model’s performance on Kilo has been pushed even further, unlocking better instruction adherence and sharper contextual reasoning.

So, what exactly is under the hood of the model formerly known as Elephant?

Here is the official description of the newly unmasked powerhouse:

Introducing Ling-2.6-flash, an Instant model with 104B total parameters and 7.4B active parameters, built for real-world agents to deliver fast responses, strong execution, and high token efficiency—matching SOTA-class performance at similar scale while significantly reducing token usage across coding, document processing, and lightweight agent workflows.

This balance between total and active parameters is what makes it so agile. In an era where AI agents are expected to operate autonomously, process massive context windows, and return actionable code quickly, Ling-2.6-flash hits the sweet spot of intelligence and speed. You get the capacity of a 104B-parameter model, paired with the low latency and cost-effectiveness of having only 7.4B of those parameters active at any given time.
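To put those two numbers in perspective, here is a quick back-of-the-envelope sketch in Python. It uses only the 104B total and 7.4B active figures from the description above; the function and variable names are our own, chosen for illustration, and the result says nothing about real-world latency or cost, which depend on the serving setup.

```python
# Parameter counts from the official description, in billions.
TOTAL_PARAMS_B = 104.0
ACTIVE_PARAMS_B = 7.4


def active_fraction(total_b: float, active_b: float) -> float:
    """Return the share of parameters that are active (hypothetical helper)."""
    if total_b <= 0:
        raise ValueError("total parameter count must be positive")
    return active_b / total_b


fraction = active_fraction(TOTAL_PARAMS_B, ACTIVE_PARAMS_B)
ratio = TOTAL_PARAMS_B / ACTIVE_PARAMS_B

print(f"Active share of parameters: {fraction:.1%}")  # about 7.1%
print(f"Total-to-active ratio: {ratio:.1f}x")         # about 14.1x
```

In other words, roughly one parameter in fourteen is active at a time, which is the arithmetic behind the "large model, small footprint" pitch.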

Many are familiar with Ant Group’s trillion-parameter model released at the end of 2025—Ling-1T—which seemed designed to compete directly with DeepSeek-V3. This flash model is an intriguing refinement of those capabilities.

A major driver behind this agility is how the model was trained. Ant Ling models are specifically designed with Agentic RL (Reinforcement Learning). Because of this agent-first foundation, Ling-2.6-flash is fully compatible with OpenClaw and goes far beyond simple text generation, handling complex agentic workflows: executing terminal operations, managing dynamic GUI interactions, and coordinating sophisticated tool calls. The best way to see how this works is to use KiloClaw, our hosted OpenClaw that’s faster, easier and safer than anything else on the market.

Celebrate the Reveal: Free Ling-2.6-flash for an Entire Week

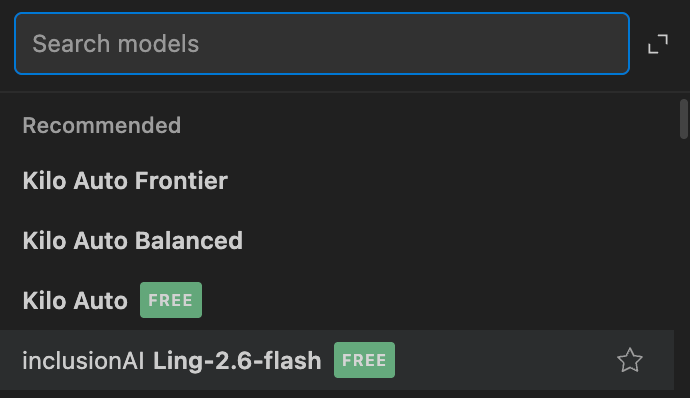

To celebrate this unmasking and thank you for your incredible contributions, we want to make sure everyone has the opportunity to experience the raw power of Ling-2.6-flash without any friction. You’ll find it under the inclusionAI moniker, which is the name of Ant Group’s Artificial General Intelligence (AGI) initiative.

Starting right now, Ling-2.6-flash is completely free to use in Kilo Code and KiloClaw for an entire week—with absolutely no limits. That’s right. No rate limits holding back your automated agent loops, no token caps on your massive document processing tasks, and no paywalls stopping your late-night coding sessions. Whether you are building an autonomous research agent or a personal AI assistant with KiloClaw, we’ve got you covered.

The Elephant is out of the bag 🐘

We can’t wait to see what you build with Ant’s Ling-2.6-flash during this unlimited free week. Log into Kilo now, and let your agents loose!

Kilo, good job bringing this model. Are there any objective benchmarks? Maybe I missed them?

This model is not all good, beats every other model in hallucination though