We analyzed how much Kilo Code Reviewer costs on real-life coding tasks

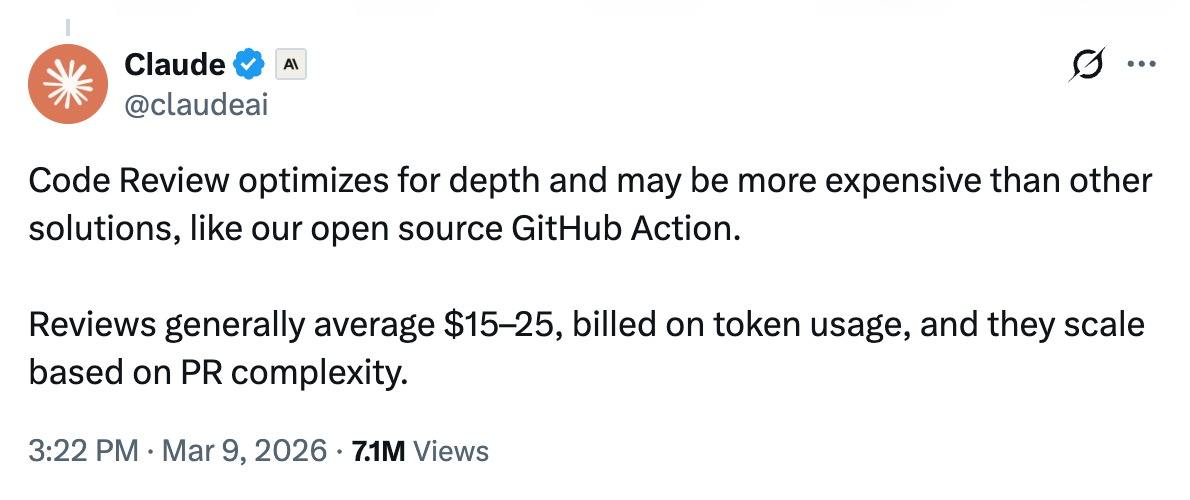

AI code review is going mainstream. Anthropic recently launched Code Review for Claude Code, a multi-agent review system for GitHub PRs. It optimizes for depth, and a single review can cost between $15-25:

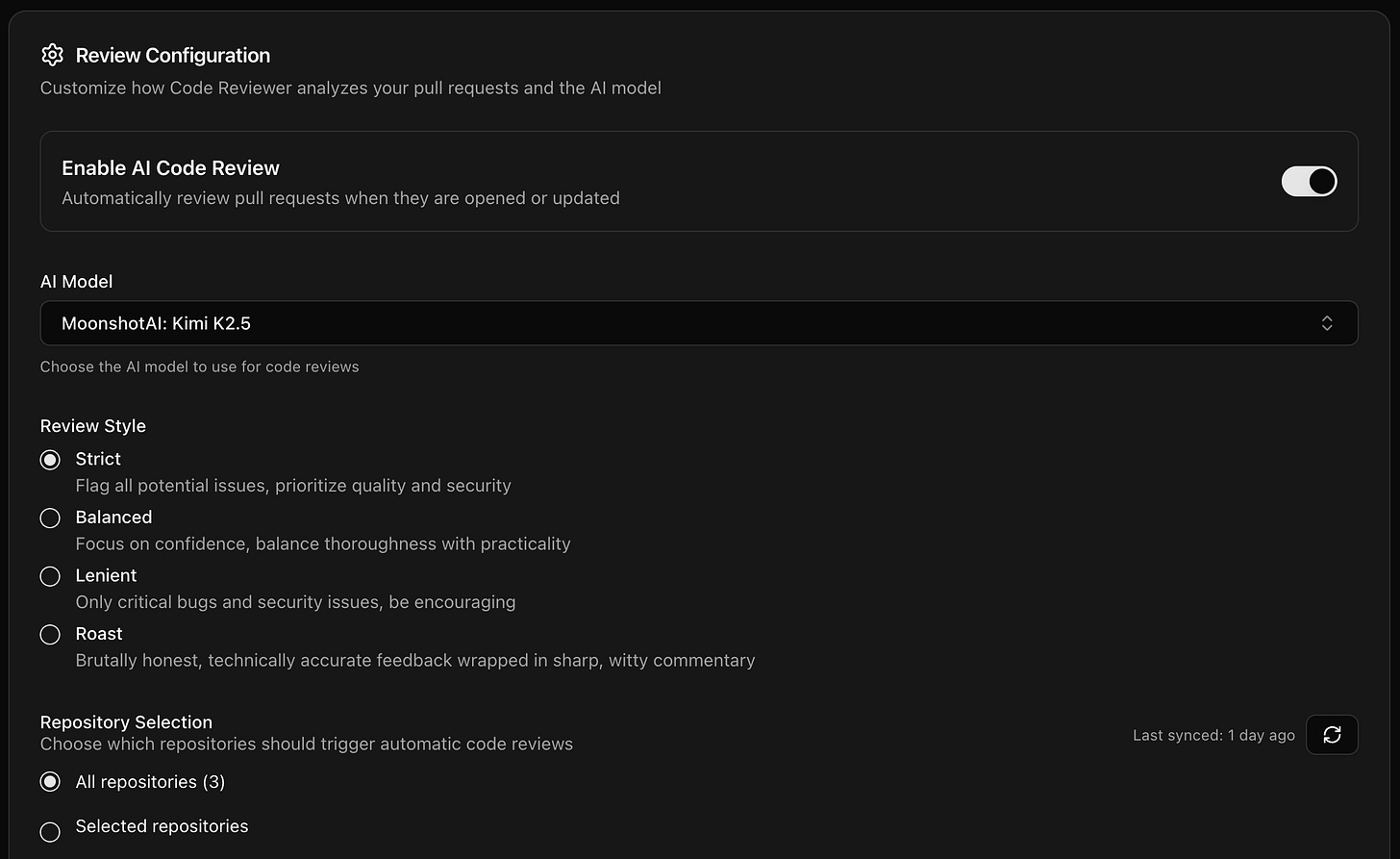

We’ve had Kilo Code Reviewer for a while now and one thing people love about it is the ability to choose between different models.

In this post, we’ll focus on something else: cost. We we will run Kilo Code Reviewer on real open-source PRs with two different models and track every token and dollar.

The Setup

For this test we wanted to get as close as possible to real-world examples, so we used actual commits from Hono, the TypeScript web framework (~40k stars on GitHub).

We forked the repo at v4.11.4 and cherry-picked two real commits to create PRs against that base:

Small PR (338 lines, 9 files): Commit 16321afd adds

getConnInfoconnection info helpers for AWS Lambda, Cloudflare Pages, and Netlify adapters, with full test coverage. Nine new files across three adapter directories.Large PR (598 lines, 5 files): Commit 8217d9ec fixes JSX link element hoisting and deduplication to align with React 19 semantics. Five files with 575 insertions and 23 deletions, including 485 lines of new tests.

Both are real changes written by real contributors and both shipped in Hono v4.12.x.

We created duplicate branches for each PR so we could run the same diff through two models at opposite ends of the spectrum:

Claude Opus 4.6, Anthropic’s current frontier model and one of the most expensive options available in Kilo Code Reviewer.

Kimi K2.5, an open-weight MoE model from Moonshot AI (1 trillion total parameters, 32 billion activated per token) at a fraction of the per-token price.

Both models reviewed the PRs with Balanced review style and all focus areas enabled.

Cost Results

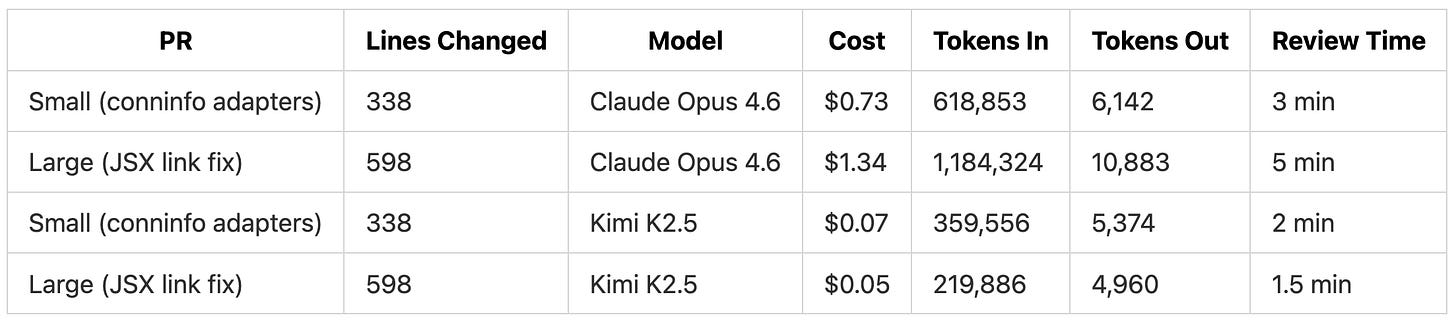

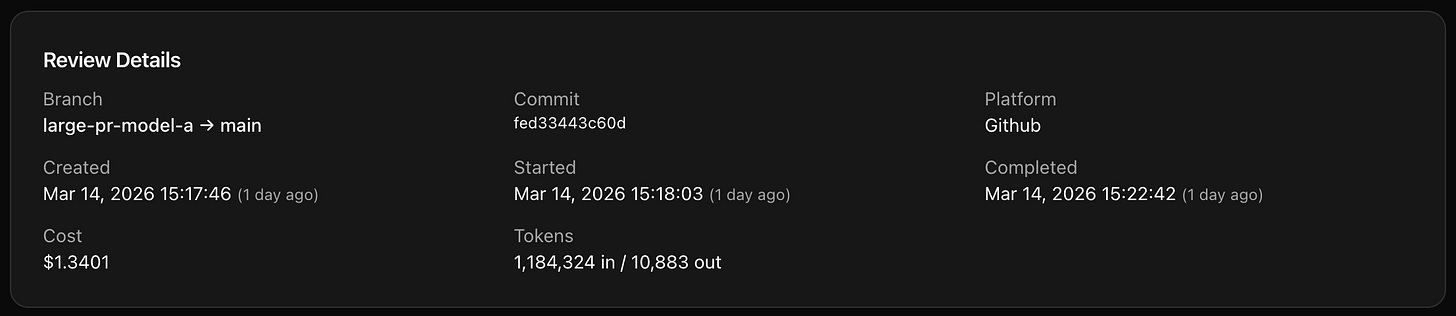

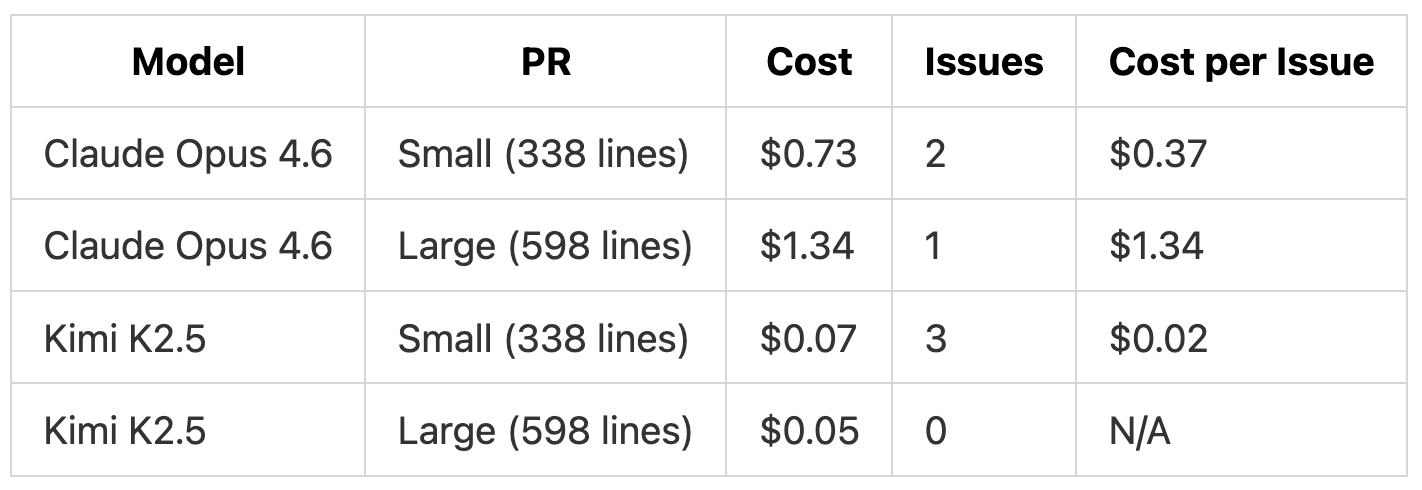

The most expensive review we ran was Claude Opus 4.6 on the 598-line PR. That cost $1.34.

Let’s dive deeper.

Breaking Down the Token Usage

Kilo Code Reviewer work by dispatching agents that read the diff, then pull in surrounding files for context. Different models approach this differently, and the token usage across our four reviews shows how.

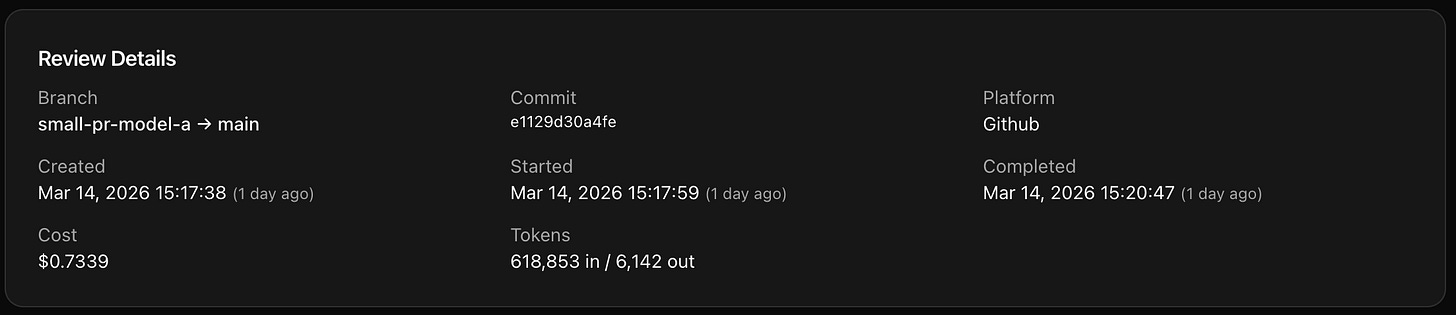

1. Small PR (338 lines). Opus 4.6 used 618,853 input tokens. Kimi K2.5 used 359,556 on the same diff. That’s 72% more input tokens for the exact same code change.

The output tokens were similar (6,142 vs 5,374), so the difference is almost entirely in how much surrounding code the review agent pulled in. Opus 4.6 pulled in the existing handler.ts file to understand what event types the Lambda adapter supports, which is how it caught the missing LatticeRequestContextV2 type. Kimi K2.5 stayed closer to the diff and missed that issue.

2. Large PR (598 lines). Opus 4.6 consumed 1,184,324 input tokens (5.4x more than Kimi K2.5’s 219,886). Opus 4.6 pulled in more of the JSX rendering codebase to understand how the existing deduplication logic worked before evaluating the changes. Kimi K2.5 did a lighter pass and found no issues.

What Drives the Cost

The cost of a review comes down to three factors.

1. Model pricing per token.

Claude Opus 4.6 costs $5 per million input tokens and $25 per million output tokens.

Kimi K2.5 costs $0.45 per million input tokens and $2.20 per million output tokens.

That’s roughly a 10x difference in per-token price, and it’s the biggest cost driver.

2. How much context the agent reads. The review agent doesn’t only look at the diff.

It pulls in related files to understand the change in context.

Different models approach this differently, and some read far more surrounding code than others:

Opus 4.6 read 618K-1.18M input tokens across our two PRs.

Kimi K2.5 read 219K-359K.

More context means more tokens means higher cost.

3. PR size. Larger diffs mean more code to review and more surrounding context to pull in.

Our 598-line PR cost 83% more than the 338-line PR with Opus 4.6 ($1.34 vs $0.73).

With Kimi K2.5, the large PR actually cost less than the small one ($0.05 vs $0.07), likely because the agent did a lighter pass on the well-tested JSX changes.

Cost per Issue

Another way to look at the data is cost per issue found.

On the small PR, Kimi K2.5 found more issues at a lower cost per issue ($0.02 vs $0.37). But the nature of the findings was different. Opus 4.6 found issues that required reading files outside the diff (the missing Lattice event type, the XFF spoofing risk). Kimi K2.5 focused on defensive coding within the diff itself (null checks, edge cases).

On the large PR, Opus 4.6 found one real issue for $1.34. Kimi K2.5 found none for $0.05.

Both of these commits shipped in Hono v4.12.x. The issues that Opus 4.6 found (the missing Lattice event type, the potential TypeError in shouldDeDupeByKey) are in the actual released code. A $1.34 review that catches even a small bug before it ships to production pays for itself many times over in avoided debugging time and potential incident response.

Cost per issue varies by PR. Clean, well-tested code (like the JSX fix with 485 lines of tests) produces fewer findings regardless of model. Code with more surface area (like the multi-adapter PR touching three cloud providers) produces findings from both models, just at different depths.

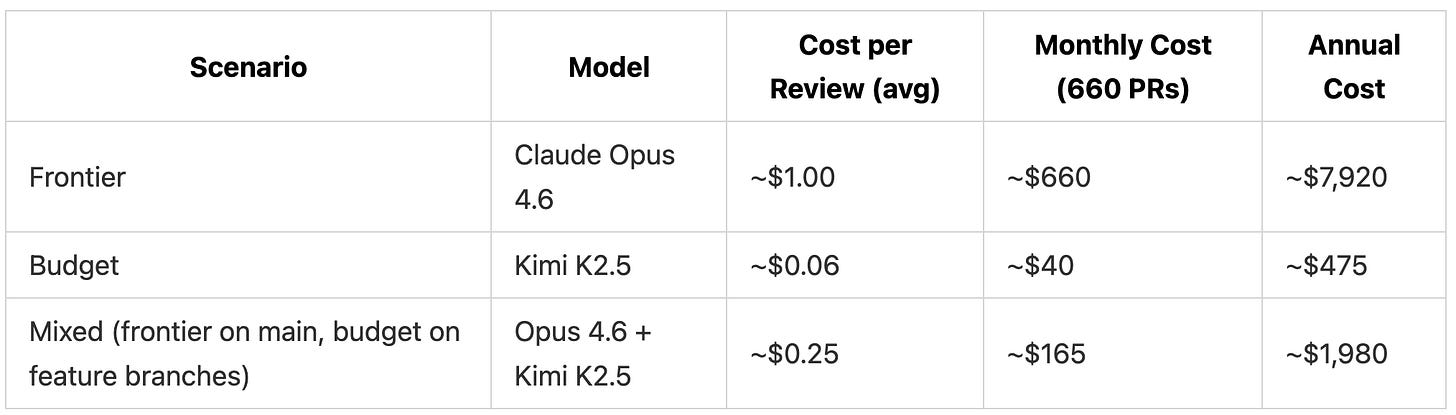

Monthly Cost Assuming Average Team Usage

We modeled three scenarios based on a team of 10 developers, each opening 3 PRs per day (roughly 660 PRs per month)

The frontier estimate uses the average of our two Opus 4.6 reviews ($1.04). The budget estimate uses the average of our two Kimi K2.5 reviews ($0.06). The mixed approach assumes 20% of PRs (merges to main, release branches) get a frontier review and 80% get a budget review.

What You Get at Each Price Point

Cheaper models find fewer issues. Here’s what each model found on the same code.

Claude Opus 4.6 on the small PR ($0.73) found 2 issues. It caught a missing type in the LambdaRequestContext union (the LatticeRequestContextV2 event type wasn’t handled) and flagged that X-Forwarded-For header parsing trusts the first IP, which can be spoofed behind a load balancer. Both findings required understanding code outside the diff.

Kimi K2.5 on the small PR ($0.07) found 3 issues. It caught a missing null check on c.env, an edge case where an empty X-Forwarded-For header produces an empty string instead of undefined, and flagged an unnecessary test assertion. It also noted issues outside the diff, including that test files use random values instead of realistic IPs and that the Netlify adapter has useful geo data it doesn’t expose.

Claude Opus 4.6 on the large PR ($1.34) found 1 issue. It identified that the exported shouldDeDupeByKey function would throw a TypeError if called with an unexpected tag name, since deDupeKeyMap[tagName] returns undefined for unknown keys. The review also included a summary explaining the PR’s purpose and how the changes align with React 19 behavior.

Kimi K2.5 on the large PR ($0.05) found 0 issues and recommended merging. The review summary correctly described what the PR does and noted that the test coverage was thorough.

On the small PR, both models found real issues, though different ones. Opus 4.6’s findings came from reading files outside the diff (understanding the Lattice event type from a separate file). Kimi K2.5 focused on defensive coding patterns within the diff itself and found more issues by count (3 vs 2), though at lower severity.

On the large PR, the gap was clearer. Opus 4.6 caught a real potential TypeError that Kimi K2.5 missed entirely.

What This Means for Choosing a Model

The model you pick for code reviews depends on what you’re optimizing for.

If you want maximum coverage on critical PRs, a frontier model like Claude Opus 4.6 reads more context and catches issues that require understanding code outside the diff. Our most expensive review was $1.34 for a 598-line PR.

If you want cost-efficient screening on every PR, a budget model like Kimi K2.5 still catches real issues at a fraction of the cost. Our cheapest review was $0.05. It won’t catch everything, but it provides a baseline check on every change for practically nothing.

If you want both, you can switch models in Kilo Code Reviewer depending on the PR. Use a budget model for day-to-day feature branch reviews and switch to a frontier model when merging into main or cutting a release. Our mixed scenario estimate was ~$165/month for 660 PRs.

The free models we tested in our previous code review post (Grok Code Fast 1, MiniMax M2, Devstral 2) are another option. Grok Code Fast 1 caught 100% of planted security vulnerabilities and matched Claude Opus 4.5’s detection rate in that test. Free models cost $0 per review during their beta period.

Conclusion: Frontier models read more context, catch deeper issues, and cost more. Budget models catch surface-level issues quickly and cheaply. But even at the frontier end, a $1.34 review that catches one bug before it ships to users pays for itself many times over in avoided debugging, hotfixes, and incident response. At $0.05-$1.34 per review, the math works out regardless of which model you pick.

Testing performed using Code Reviewer, a product area of Kilo.

Way to go : ) These are the things I like to read.