PinchBench 2.0 is here

148 tasks, parallel judging, thinking-level support, and a brand new leaderboard

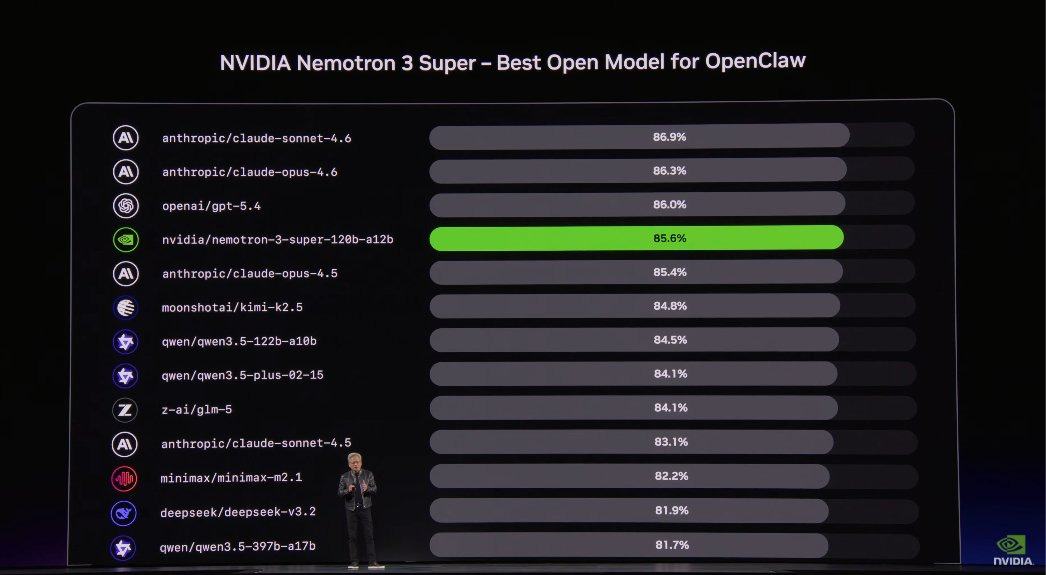

When Jensen Huang put PinchBench on screen at GTC, I had a moment of genuine “oh s***, people are actually using this.” What started as me wanting to know which model to run on my OpenClaw setup has become the reference benchmark for evaluating AI coding agents in real-world workflows. That comes with responsibility.

(“oh s***” obviously means “oh snap” … what did you think it was?)

Today we’re shipping PinchBench 2.0. Here’s what changed and why.

The problems with v1

V1 worked by running 23 tasks, grading them, and giving you a score. But it had shortcomings that became obvious as usage scaled:

Scoring was gameable. The leaderboard ranked by mean score across completed tasks—but didn’t account for how many tasks you ran. An agent that cherry-picked 1 easy task and scored 100% would outrank one that ran all 23 and scored 94.8%. Broken.

At least one task was basically impossible. A 95% failure rate across 90% of models isn’t testing capability—it’s wasting compute. We fixed or pulled those.

Version tracking was opaque. We used git commit hashes to identify benchmark versions. Users saw strings like a1b2c3d in the version dropdown and had no idea what changed between them.

Race conditions in grading. The benchmark assumed that when the OpenClaw agent subprocess returned, the transcript was complete. It wasn’t always. Grading could start on a partial transcript, producing silently wrong scores.
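One way to guard against this kind of race is to wait for an explicit completion signal before grading, instead of trusting the subprocess exit. The sketch below is hypothetical: it assumes a JSONL transcript whose final record is `{"type": "end"}`, which is not necessarily how OpenClaw or PinchBench mark completion, and the function names are made up for illustration.

```python
import json
import tempfile
import time
from pathlib import Path


def transcript_is_complete(path: Path) -> bool:
    """True if the last non-empty line parses as the (assumed) end marker."""
    if not path.exists():
        return False
    lines = [ln for ln in path.read_text().splitlines() if ln.strip()]
    if not lines:
        return False
    try:
        last = json.loads(lines[-1])
    except json.JSONDecodeError:
        return False  # the last line is probably still being written
    return isinstance(last, dict) and last.get("type") == "end"


def wait_for_transcript(path: Path, timeout_s: float = 30.0, poll_s: float = 0.2) -> bool:
    """Poll until the transcript looks complete or the timeout expires."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        if transcript_is_complete(path):
            return True
        time.sleep(poll_s)
    return transcript_is_complete(path)


# Small demo: a partial transcript is rejected, a finished one is accepted.
with tempfile.TemporaryDirectory() as tmp:
    transcript = Path(tmp) / "transcript.jsonl"
    transcript.write_text('{"type": "message", "text": "working"}\n')
    partial_ok = wait_for_transcript(transcript, timeout_s=0.3, poll_s=0.1)
    with transcript.open("a") as fh:
        fh.write('{"type": "end"}\n')
    complete_ok = wait_for_transcript(transcript, timeout_s=0.3, poll_s=0.1)
```

A grader would only start once `wait_for_transcript` returns `True`, and would treat a timeout as a failed run rather than scoring a partial transcript.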

148 tasks (up from 23)

We ran a gap analysis comparing what PinchBench tested against what real OpenClaw users actually do (based on 780+ ClawBytes on kilo.ai/kiloclaw). Then we filled the gaps:

Data analysis — CSV tasks covering US cities, iris flowers, temperature, life expectancy, weather stations, GDP, pension funds, Apple stock

Meeting & document analysis — Government meetings, advisory boards, city council, tech meetings; executive summaries, sentiment analysis, action items, Q&A extraction

Log analysis — 24 new tasks across NGINX, Apache, SSH, HDFS, MapReduce, and syslog

Development & DevOps — CI/CD debugging, Kubernetes issues, Dockerfile optimization, multi-file refactoring, test generation, commit message writing, git rescue, shell generation

Image & PDF — Image identification, PDF to calendar import

Research & writing — Market research, email drafting, Todoist cleanup, contract/legal analysis

The call for contributors we opened in March brought in proposals from community members building browser automation tasks, test generation scenarios, and more. 111 commits from the community landed between v1.2.1 and v2.0.0.

Parallel judge execution

Grading no longer waits for all tasks to finish. The judge now overlaps with task execution, so your benchmark run completes faster. We also switched to Haiku as the default judge, which grades faster without sacrificing accuracy, and added judge result caching so re-runs don’t re-grade unchanged results.
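To make the overlap concrete, here is a minimal sketch of the idea, not the actual PinchBench implementation. `run_task` and `judge` are stand-ins I made up, and the cache key (a hash of the transcript) is an assumption about what "unchanged results" could mean.

```python
import hashlib
import threading
import time
from concurrent.futures import ThreadPoolExecutor

_cache: dict[str, float] = {}
_cache_lock = threading.Lock()
judge_calls = 0  # counts real (uncached) judge invocations


def run_task(task_id: str) -> str:
    """Stand-in for running the agent; returns a transcript."""
    time.sleep(0.01)
    return f"transcript for {task_id}"


def judge(transcript: str) -> float:
    """Stand-in judge with a cache keyed on the transcript hash."""
    global judge_calls
    key = hashlib.sha256(transcript.encode()).hexdigest()
    with _cache_lock:
        if key in _cache:
            return _cache[key]
        judge_calls += 1
    time.sleep(0.01)  # pretend to call the judge model
    score = 1.0 if "transcript" in transcript else 0.0
    with _cache_lock:
        _cache[key] = score
    return score


def run_benchmark(task_ids: list[str]) -> dict[str, float]:
    with ThreadPoolExecutor(max_workers=4) as judges:
        pending = {}
        for task_id in task_ids:
            transcript = run_task(task_id)
            # Hand the transcript to a judge thread and move on to the next task.
            pending[task_id] = judges.submit(judge, transcript)
        return {task_id: fut.result() for task_id, fut in pending.items()}


first = run_benchmark(["a", "b", "c"])
calls_after_first = judge_calls
second = run_benchmark(["a", "b", "c"])  # identical transcripts hit the cache
```

In this sketch, judging for task `a` runs while task `b` is still executing, and the second run never calls the judge because nothing changed.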

Thinking-level support

v2 supports testing across different reasoning/thinking levels and reporting scores for each. A model’s performance at “low” thinking versus “high” thinking tells you something different than a single aggregate number. For cost-conscious users choosing between reasoning modes, this data matters.
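As a rough illustration of per-level reporting, the sketch below groups results by thinking level and averages each group. The record layout and the numbers are invented for the example; they are not real PinchBench output.

```python
from collections import defaultdict
from statistics import mean

# Made-up results: same two tasks run at two thinking levels.
results = [
    {"task": "task_a", "thinking": "low", "score": 0.5},
    {"task": "task_b", "thinking": "low", "score": 1.0},
    {"task": "task_a", "thinking": "high", "score": 1.0},
    {"task": "task_b", "thinking": "high", "score": 1.0},
]


def scores_by_level(records):
    """Average score per thinking level instead of one aggregate number."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["thinking"]].append(record["score"])
    return {level: mean(scores) for level, scores in grouped.items()}


per_level = scores_by_level(results)
overall = mean(r["score"] for r in results)
```

The single aggregate hides the gap between levels that the per-level view exposes.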

Multi-turn session isolation

Tasks can now specify new_session: true for proper multi-turn evaluation. That lets you benchmark conversational workflows, where context carries across turns, without session bleed between tasks.
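Here is a toy model of what session isolation buys you. Only the `new_session` key comes from PinchBench; `FakeAgent`, the `turns` field, and the runner loop are assumptions for illustration.

```python
class FakeAgent:
    """Toy agent whose 'session' is just the list of prompts it has seen."""

    def __init__(self):
        self.history = []

    def reset(self):
        self.history = []

    def send(self, prompt):
        self.history.append(prompt)
        return len(self.history)  # how much context the agent now holds


def run_tasks(agent, tasks):
    """Return the context size each task ended with."""
    context_sizes = {}
    for task in tasks:
        if task.get("new_session", False):
            agent.reset()  # start clean so earlier tasks cannot leak in
        size = len(agent.history)
        for turn in task["turns"]:
            size = agent.send(turn)  # turns within a task share context
        context_sizes[task["id"]] = size
    return context_sizes


tasks = [
    {"id": "chat_a", "new_session": True, "turns": ["plan it", "now do it"]},
    {"id": "chat_b", "new_session": True, "turns": ["unrelated question"]},
    {"id": "chat_c", "turns": ["follow-up"]},  # no flag: inherits chat_b's session
]
sizes = run_tasks(FakeAgent(), tasks)
```

`chat_a` carries context across its two turns, `chat_b` starts from zero, and `chat_c` shows the bleed you get when the flag is left off.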

Semantic versioning

We migrated from git hashes to proper semver across the entire stack. The version now comes from GitHub releases via setuptools-scm for pip installs and a BENCHMARK_VERSION file for everyone else. The leaderboard shows 2.0.0 instead of 7df28f6. All existing git-hash versions got backfilled as 1.0.0-beta.N so sorting still works. I implemented it all with Gastown from Kilo.
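Why the backfill keeps sorting sane: under Semantic Versioning precedence rules, a pre-release like 1.0.0-beta.N sorts below 1.0.0, and numeric identifiers compare as numbers, so beta.10 comes after beta.2. The helper below is a small stdlib-only sketch of those rules (it ignores build metadata); it is not the code the leaderboard uses.

```python
def semver_key(version: str):
    """Sort key following SemVer precedence for MAJOR.MINOR.PATCH[-prerelease]."""
    core, _, prerelease = version.partition("-")
    major, minor, patch = (int(part) for part in core.split("."))
    if not prerelease:
        # A release outranks any pre-release of the same core version.
        return (major, minor, patch, 1, ())
    identifiers = tuple(
        # Numeric identifiers sort numerically and below alphanumeric ones.
        (0, int(ident), "") if ident.isdigit() else (1, 0, ident)
        for ident in prerelease.split(".")
    )
    return (major, minor, patch, 0, identifiers)


versions = ["2.0.0", "1.0.0-beta.10", "1.2.1", "1.0.0-beta.2"]
ordered = sorted(versions, key=semver_key)
```

A plain string sort would put 1.0.0-beta.10 before 1.0.0-beta.2, which is exactly the kind of ordering bug the key avoids.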

Leaderboard overhaul

Scoring fairness. Runs are normalized by task count so comprehensive runs are rewarded, not penalized.

Per-task variance and retry counts. A model scoring 0.85 with std dev 0.05 is more useful than one scoring 0.90 with std dev 0.25. Consistency scores, first-try success rates, and per-run breakdowns are now surfaced.

Model landing pages. Each model gets its own page with all submissions, score trends over time, cost and speed metrics.

User profiles and contributor recognition. A contributor leaderboard alongside the model one.

Better filtering. Categorized provider filters, search, checkbox selections, and task-level filtering so you can compare models on just the tasks you care about.

Badges. Daily, weekly, and monthly recognition for top-performing models, plus overall rank badges for the top 10.
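To see why the scoring changes matter, here is a small sketch of the v1 ranking flaw and one possible fix. The normalization shown (tasks that were not run count as zero) is my assumption for illustration; the exact leaderboard formula may differ, and the consistency numbers are made up.

```python
from statistics import mean, stdev


def mean_of_completed(scores):
    """v1-style ranking: average over whatever tasks were run."""
    return mean(scores)


def suite_normalized(scores, suite_size):
    """Assumed fix: divide by the full suite, so skipped tasks count as zero."""
    return sum(scores) / suite_size


cherry_picked = [1.0]    # one easy task, scored 100%
full_run = [0.948] * 23  # all 23 v1 tasks, 94.8% on average

v1_cherry = mean_of_completed(cherry_picked)
v1_full = mean_of_completed(full_run)
fair_cherry = suite_normalized(cherry_picked, suite_size=23)
fair_full = suite_normalized(full_run, suite_size=23)


def summarize(run_scores):
    """Mean plus sample standard deviation across repeated runs."""
    return {"mean": mean(run_scores), "stdev": stdev(run_scores)}


steady = summarize([0.80, 0.85, 0.90])  # illustrative numbers
flaky = summarize([0.60, 1.00, 1.00])   # illustrative numbers
```

Under the v1 rule the cherry-picked run wins; under the normalized rule it drops to roughly 1/23 of a full score, and the standard deviation separates the steady model from the flaky one even though their means are close.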

Infrastructure improvements

--core flag for running a core task subset when you don’t need all 148

--trend and --trend-window flags for post-run analysis

Manifest-based task ordering (replaced numbered IDs)

CI workflow for manifest linting

Better OpenClaw transcript compatibility

Axiom observability for detailed logging of benchmark runs

Breaking changes

Task IDs now use manifest-based ordering instead of numbered prefixes

Default judge backend changed from openclaw to api

Get started

Visit the GitHub repo to see the tasks, run the benchmark yourself, and contribute.

The leaderboard is live with the new scoring, filtering, and model pages. If you’ve been using PinchBench results to pick your model, you now get variance, per-task breakdowns, and cost data alongside raw scores.

Full changelog: v1.2.1...v2.0.0