Trinity-Large-Thinking is Free in Kilo for a Limited Time

A stunning open reasoning model from a US-based lab

If you’ve been watching the open-source space, you know the frontier is shifting from simple chat models to complex, reasoning-heavy agents. Last week, the team at Arcee AI made a major contribution to that shift: they officially launched Trinity-Large-Thinking, a frontier open reasoning model built specifically for complex, long-horizon agents and multi-turn tool calling.

To celebrate the launch of one of the strongest open models ever released outside of China, we’re thrilled to announce that Trinity-Large-Thinking will be completely FREE to use in Kilo Code and KiloClaw for a full week, starting today, April 6th.

I know we’ve been launching a lot of models lately, but we’re extra excited about this powerful new release from a lesser-known US lab. It’s blazing fast and strong across a wide range of agentic tasks.

Here is a quick breakdown of why this model is a game-changer for your daily workflow, and why you should test drive it ASAP.

The Architecture: Massive Scale, Insane Efficiency

Usually, when you hear about a 400-billion-parameter model, you immediately worry about latency. Arcee tackled this with a sparse architecture that keeps per-token compute small, combined with optimization across the entire inference process.

Sparse MoE Design: Trinity-Large-Thinking is a 398B-parameter sparse Mixture-of-Experts (MoE) model.

Active Parameters: During inference, it activates only about 13B parameters per token.

The Speed Advantage: Because of this extreme sparsity, it possesses the deep knowledge of a massive system but runs roughly 2 to 3 times faster than its peers on the same hardware.
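To make the sparsity concrete: 13B active out of 398B total means each token touches only about 3.3% of the weights. The toy router below is a minimal sketch of how top-k MoE routing achieves this, assuming a generic softmax-over-top-k gate. The expert count, `k`, and the scalar "experts" are illustrative assumptions, not Trinity’s actual configuration or Arcee’s router.

```python
import math

# Figures from the post: 398B total parameters, ~13B active per token.
TOTAL_PARAMS = 398e9
ACTIVE_PARAMS = 13e9


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, k):
    """Pick the top-k experts for one token and renormalize their gate weights.

    Generic top-k gating, assumed for illustration; not Arcee's router.
    """
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    top = ranked[:k]
    gates = softmax([router_logits[i] for i in top])
    return list(zip(top, gates))


def moe_forward(x, experts, router_logits, k):
    """Combine outputs from only the selected experts; unselected experts never run."""
    return sum(gate * experts[idx](x) for idx, gate in route_token(router_logits, k))


if __name__ == "__main__":
    print(f"active fraction: {ACTIVE_PARAMS / TOTAL_PARAMS:.1%}")  # prints 3.3%
    # Hypothetical layer: 8 scalar "experts", 2 active per token.
    experts = [lambda x, s=s: s * x for s in range(1, 9)]
    logits = [0.1, 2.0, -1.0, 1.5, 0.0, -0.5, 0.3, 0.2]
    print(route_token(logits, k=2))
    print(moe_forward(1.0, experts, logits, k=2))
```

The point of the sketch is the loop in `moe_forward`: compute scales with `k`, not with the total number of experts, which is why a model with hundreds of billions of parameters can run with the per-token cost of a much smaller one.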

The Agentic Edge: Perfect for KiloClaw

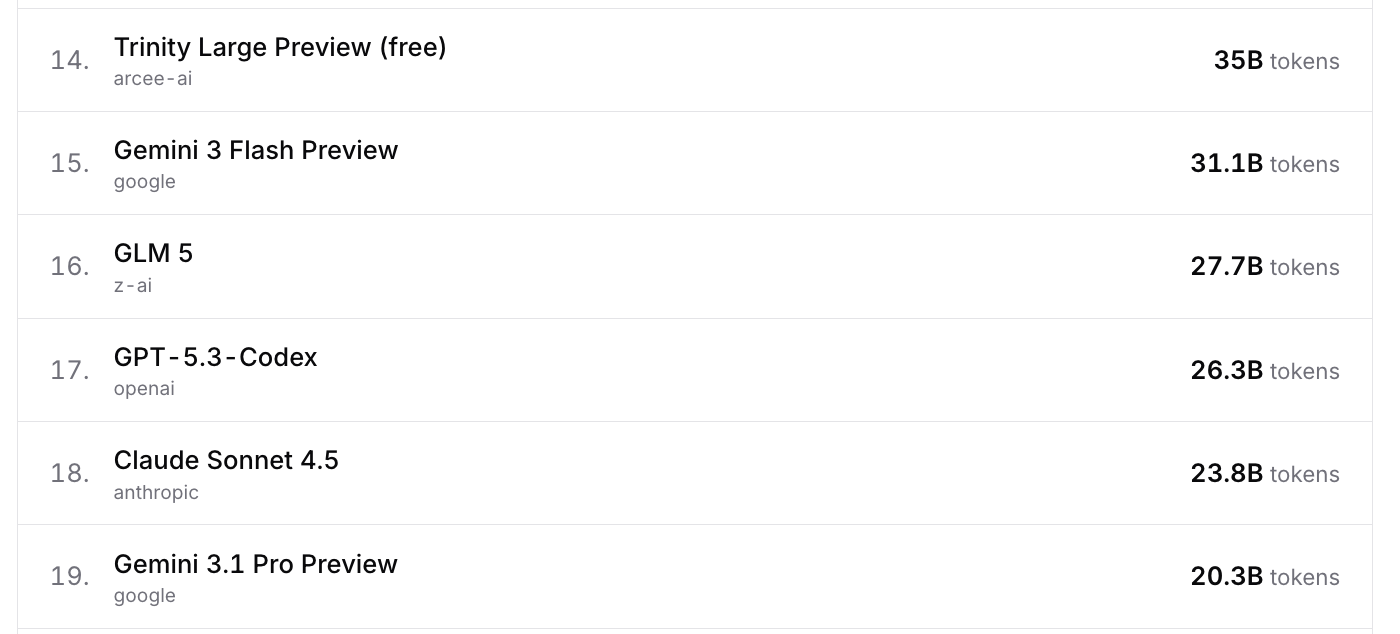

The preview release of this model, Trinity Large Preview, has been free in Kilo for over two months and quickly rose to the top of the OpenRouter leaderboards for both Kilo Code (including KiloClaw) and OpenClaw.

And that was just the preview. Although Trinity Large’s architecture natively supports context windows of up to 512k tokens, the Preview API served it with a 128k context window using 8-bit quantization. Now you can use the full release for free, with a longer context window for multi-turn agent work.
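The 8-bit detail matters if you plan to self-host the Apache 2.0 weights. As a rough back-of-the-envelope sketch, weight memory is parameter count times bytes per parameter. The helper below is hypothetical and counts only the weights, ignoring the KV cache (which grows with context length), activations, and quantization metadata such as scales. In a typical MoE deployment every expert stays resident, so memory tracks the 398B total rather than the 13B active.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes).

    Weights only: ignores KV cache, activations, and quantization scales.
    """
    return num_params * bits_per_param / 8 / 1e9


TRINITY_TOTAL_PARAMS = 398e9  # total (not active) parameters, from the post

for bits in (16, 8):
    # prints "16-bit: ~796 GB" then "8-bit: ~398 GB"
    print(f"{bits}-bit: ~{weight_memory_gb(TRINITY_TOTAL_PARAMS, bits):.0f} GB")
```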

Trinity-Large-Thinking wasn’t built to ace trivia benchmarks. It was purpose-built for tool calling, multi-step planning, and agent workflows. This makes it an absolute monster when plugged into agentic features like KiloClaw (our hosted OpenClaw environment).

Why is this new Trinity model so good for agentic use cases?

Native Reasoning Traces: The model generates explicit reasoning traces before producing its final response.

Context is Key: This internal thinking process is critical to the model’s performance. When running agentic loops in OpenClaw, these thinking tokens must be kept in context for multi-turn conversations to function correctly.

Massive Memory: To support these long reasoning chains across many agentic steps, the model offers an extended context window. It’s particularly good at multi-turn tool use, context coherence, and instruction following across long-horizon agent runs.
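In practice, keeping the thinking tokens in context means the client has to send each earlier assistant turn back with its reasoning intact, not just the final answer. Here is a minimal sketch of that bookkeeping, assuming a hypothetical message format with a separate `reasoning` field. The class and field names are illustrative only and are not Kilo’s, OpenClaw’s, or Arcee’s actual API schema.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Turn:
    role: str                        # "user", "assistant", or "tool"
    content: str
    reasoning: Optional[str] = None  # hypothetical field for the model's thinking trace


@dataclass
class Conversation:
    turns: List[Turn] = field(default_factory=list)

    def add(self, turn: Turn) -> None:
        self.turns.append(turn)

    def to_request(self, keep_reasoning: bool = True) -> List[dict]:
        """Serialize the history for the next model call.

        With keep_reasoning=True, every earlier assistant turn is re-sent with
        its reasoning trace attached instead of being reduced to its final text.
        """
        messages = []
        for t in self.turns:
            msg = {"role": t.role, "content": t.content}
            if keep_reasoning and t.reasoning is not None:
                msg["reasoning"] = t.reasoning
            messages.append(msg)
        return messages


if __name__ == "__main__":
    convo = Conversation()
    convo.add(Turn("user", "List the failing tests."))
    convo.add(Turn("assistant", "Calling the test runner.", reasoning="Need test output first."))
    convo.add(Turn("tool", "2 failures: test_io, test_parse"))
    for m in convo.to_request():
        print(m)
```

Passing `keep_reasoning=False` would strip exactly the tokens the post says must stay in context between agent steps.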

Top of the PinchBench Index

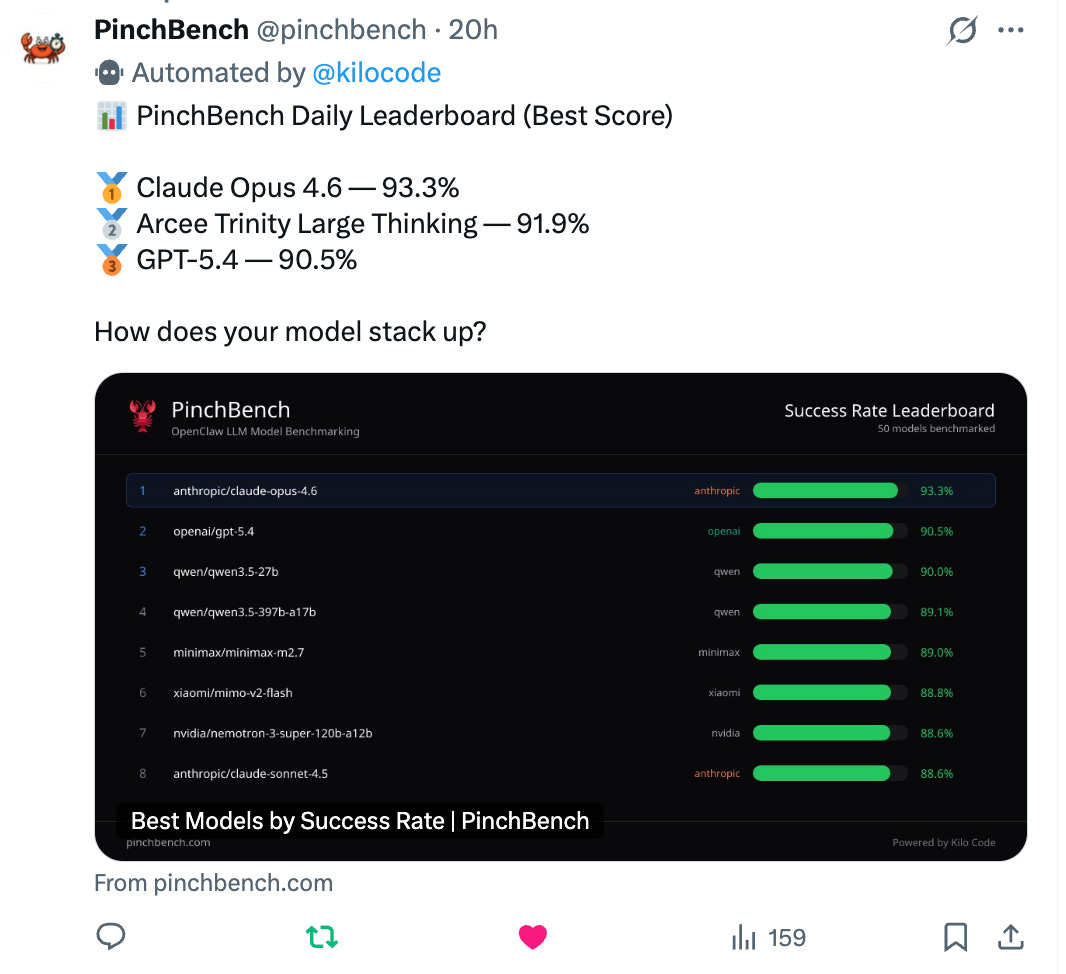

We don’t just take a lab’s word for it; we look at the data. In our internal testing, the model performed strongly across OpenClaw use cases in KiloClaw.

Arcee built this model focusing on the things that make agents feel real in practice: staying coherent across turns, using tools cleanly, and strictly following instructions.

The results speak for themselves:

Top-Tier Performance: Initial testing saw Trinity-Large-Thinking rise to #2 on PinchBench, a benchmark measuring model capability on tasks relevant to agents like OpenClaw.

The Heavyweight Challenger: On PinchBench, it sits just behind Claude Opus 4.6 in agentic capability.

Unbeatable Economics: While rivaling Opus 4.6, it costs just $0.90 per million output tokens on Arcee’s API, roughly 96% cheaper than Opus 4.6 on output. (Plus, it’s currently free in Kilo, which is pretty affordable!)
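The savings figure is simple arithmetic. In this quick sketch the helper names are hypothetical, and because the post does not state the comparison price, the code only derives the per-million price that a 96% saving would imply, then prices an example workload at Arcee’s $0.90 rate. Since the post says "roughly" 96%, treat the implied price as approximate.

```python
def cost_usd(output_tokens: int, price_per_million: float) -> float:
    """Cost of generating `output_tokens` at a given $/1M-output-token rate."""
    return output_tokens / 1_000_000 * price_per_million


def implied_reference_price(cheap_price: float, savings: float) -> float:
    """Price the cheaper option is compared against, given a fractional saving.

    savings = 1 - cheap / reference  =>  reference = cheap / (1 - savings)
    """
    return cheap_price / (1 - savings)


TRINITY_PRICE = 0.90  # $ per 1M output tokens on Arcee's API, per the post

ref = implied_reference_price(TRINITY_PRICE, 0.96)
print(f"implied comparison price: ~${ref:.2f} per 1M output tokens")
# Hypothetical agent workload: 5M output tokens.
print(f"Trinity: ${cost_usd(5_000_000, TRINITY_PRICE):.2f} vs ~${cost_usd(5_000_000, ref):.2f}")
```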

At Kilo, we believe in avoiding vendor lock-in. Arcee shares that philosophy. They release model weights on Hugging Face under the Apache 2.0 license, and this has been true for all of their models. They built Trinity Large because they believe developers and enterprises need models they can inspect, post-train, host, distill, and truly own.

Try it today in our CLI, IDE extensions, and agentic features like Kilo’s Cloud Agents and KiloClaw. You’ll be glad you did.
