Mistral Medium 3.5 is Live in Kilo Code

The OSS lab's powerful new blended model is surprisingly affordable

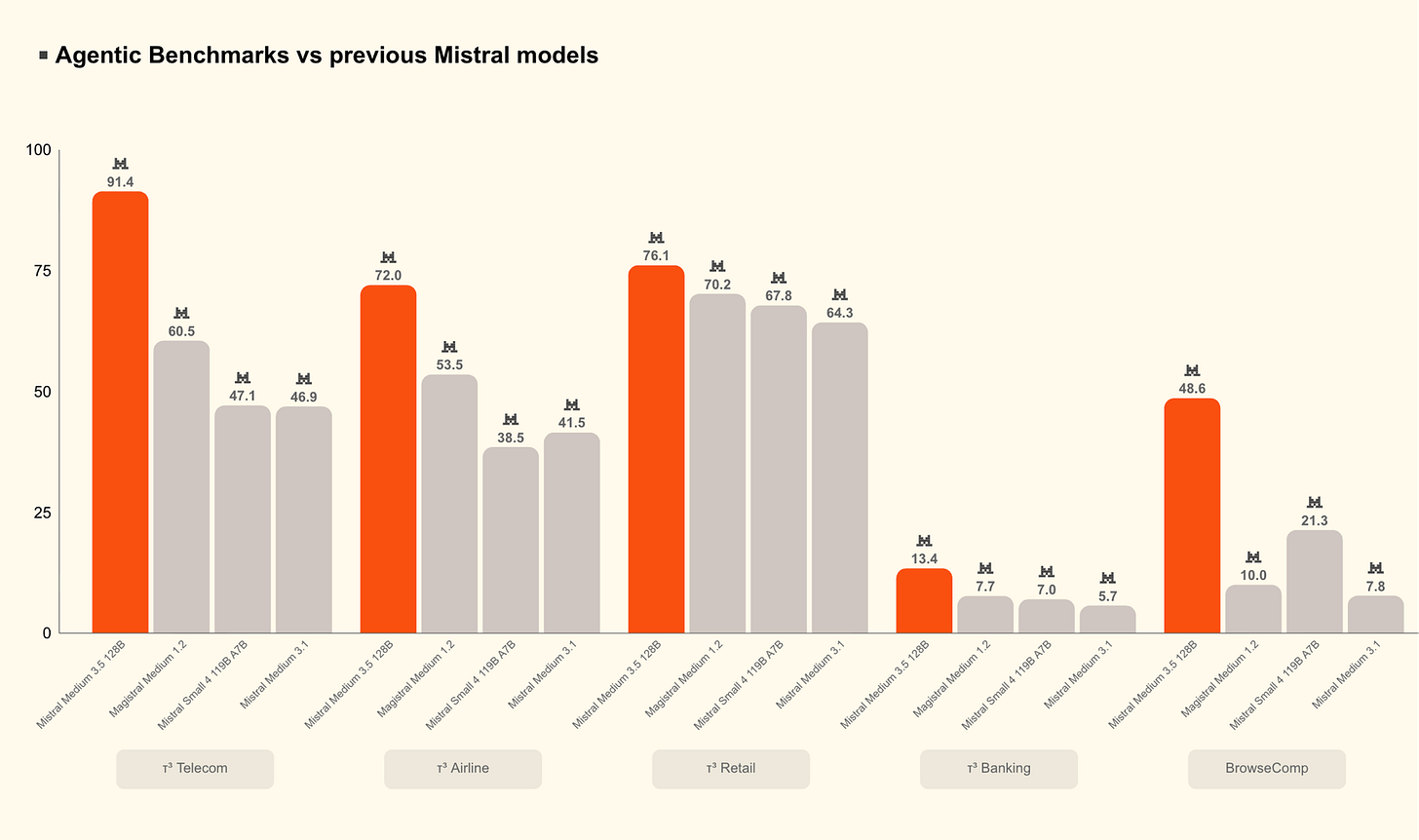

We’re thrilled to announce that the public preview version of Mistral Medium 3.5 is now live in Kilo. This is Mistral’s first blended model (it merges instruction-following, reasoning, and coding into a single 128B dense model) and it puts the lab instantly back on the OSS map.

If it’s seemed quiet on the Mistral front for a while, that’s because they’ve been heads-down building. This new model is a major leap for the lab, and its focus on agentic coding and engineering work benefits all of us.

Mistral’s new flagship is a dense 128B model with a 256k context window, built from the ground up for long-horizon agentic work. Reasoning effort is configurable, so you can dial it up for a gnarly refactor or keep it light for a quick edit. It scores 77.6% on SWE-Bench Verified, putting it ahead of Devstral 2 and models like Qwen3.5 397B A17B. The vision encoder was trained from scratch to handle variable image sizes, and the whole thing can run self-hosted on as few as four GPUs.

And Mistral is sticking to their OSS principles: the new model shipped with open weights under a modified MIT license.

This is a serious new model for serious engineering tasks, and it’s now the default in the Mistral Vibe CLI and Le Chat. In Kilo, you can use it alongside hundreds of other top models, so you can always pick the right tool for the job.

Use Mistral Medium 3.5 Everywhere You Use Kilo

The new model is available in the Kilo Gateway, so you can use it everywhere with a single login.

VS Code Extension

The upgraded Kilo Code VS Code extension now surfaces Mistral Medium 3.5 in the model switcher. Pick it for any task where you want a model that can hold a lot of context, reason through complexity, and produce structured output your codebase can actually consume.

Kilo Code CLI

Running Kilo from the terminal? Mistral Medium 3.5 is available there too. It’s a strong choice for longer CLI sessions — dependency upgrades, test generation, CI investigations — where you want the model working steadily without losing the thread.

Cloud Agents

Kilo Code’s cloud agent infrastructure is where Mistral Medium 3.5 really opens up. Kick off sessions powered by this model, walk away, and come back to finished branches or draft PRs. The model was built specifically for async, multi-tool work — running long stretches reliably, calling tools in sequence, producing structured handoffs. That makes it a natural fit for the tasks you want to delegate completely: module refactors, issue triage, test coverage gaps, incident investigations.

KiloClaw

Mistral Medium 3.5 is available as a model option across KiloClaw recipes. Whether you’re running a personal claw or a work claw, you can now back those workflows with a model that handles complex, multi-step reasoning without breaking a sweat.

Try It in Kilo Today

Mistral Medium 3.5 is priced at $1.50 per million input tokens and $7.50 per million output tokens through the API. For a frontier-class 128B model at this capability level, that’s competitive — especially for agentic runs that justify the context and reasoning headroom.

Blended across input and output tokens, the price comes to $3 per million tokens for general chat and just $1.56 per million tokens for long-context summarization, so it’s more affordable than it might look at first glance.
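To see where blended figures like these can come from, here is a minimal sketch that treats the blended price as a weighted average of the input and output rates. The token mixes are assumptions, not numbers from Mistral or Kilo: a 3:1 input-to-output ratio for general chat and a 99:1 ratio for long-context summarization happen to reproduce the figures above. The function name `blended_price` is hypothetical.

```python
# Prices quoted in this post, in USD per million tokens.
INPUT_PRICE = 1.50
OUTPUT_PRICE = 7.50


def blended_price(input_share: float) -> float:
    """Weighted-average price per million tokens for a given input share."""
    if not 0.0 <= input_share <= 1.0:
        raise ValueError("input_share must be between 0 and 1")
    return input_share * INPUT_PRICE + (1.0 - input_share) * OUTPUT_PRICE


# Assumed mixes (not stated in the post): 3:1 for chat, 99:1 for summarization.
chat = blended_price(0.75)
summarization = blended_price(0.99)

print(f"general chat:  ${chat:.2f} per million tokens")
print(f"summarization: ${summarization:.2f} per million tokens")
```

Plug in the input share from your own usage to estimate what a typical session would cost; output-heavy work such as large code generation will land closer to the $7.50 end.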

Plus, if you grab a Kilo Pass, you get a healthy discount :)

Open the model switcher in the latest version of our VS Code extension, select it in your CLI agent config, or choose it as the backing model for your next KiloClaw recipe. It’s available now in public preview — we’d love to hear what you build with it.