Enterprise AI Has a Trust Problem. We’re Hearing It Firsthand.

Here's what businesses are telling us

The last few weeks have been chaotic for anyone paying attention to the AI tooling market. Cursor is set to sell to SpaceX. Anthropic pulled the rug on subscription pricing for businesses. And in the middle of all that noise, our conversations with enterprise teams have been converging on the same frustrations.

The specifics differ by industry. The underlying problem is consistent: walled gardens and pricing uncertainty.

Their Ceiling Is Your Ceiling

Take infrastructure trust. A top-three auto manufacturer came to us because their developers were hitting Cursor rate limits and couldn’t build while they waited for the limits to reset. That same company had a second concern, quieter but more significant: they suspected the frontier lab powering their primary tool had oversold capacity and was running into compute headroom issues.

Whether that was actually true didn’t matter. The perception had already taken root. If your workflow depends on one lab’s availability, their ceiling is your ceiling.

Then there’s cost visibility. A Director of DevEx at one of the world’s largest banks came to us because his developers had existing model agreements with frontier labs, negotiated at the enterprise level, and he wanted them to actually use those models instead of routing everything through a middleman - which isn’t possible on vendor-locked tools. On top of that, the other tools he’d evaluated gave him no visibility into token-level costs. When you can’t see what you’re paying for, you’re trusting a vendor’s math on your own spend.

A platform engineer at one of the UK’s largest retailers had a similar frustration: his colleague was evaluating a tool with an opaque credit system and finding that developers burned through credits fast when they asked what he called “some juicy questions of the codebase.” They wanted powerful models, but they also wanted to know what those models were costing.
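To make "token-level cost visibility" concrete, here is a minimal sketch of per-request cost accounting. It is an illustration, not how any particular tool does it: the model names and per-million-token prices are hypothetical placeholders, and `UsageRecord`, `cost_usd`, and `cost_by_model` are names invented for this example.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical prices in USD per one million tokens: (input, output).
# These are placeholders, not real vendor rates.
PRICES = {
    "example-large": (Decimal("3.00"), Decimal("15.00")),
    "example-small": (Decimal("0.25"), Decimal("1.25")),
}

MILLION = Decimal(1_000_000)


@dataclass(frozen=True)
class UsageRecord:
    """Token counts for a single request (illustrative shape)."""
    model: str
    input_tokens: int
    output_tokens: int


def cost_usd(record: UsageRecord) -> Decimal:
    """Cost of one request, priced separately for input and output tokens."""
    in_price, out_price = PRICES[record.model]
    return (record.input_tokens * in_price
            + record.output_tokens * out_price) / MILLION


def cost_by_model(records: list[UsageRecord]) -> dict[str, Decimal]:
    """Sum request costs per model so spend can be audited line by line."""
    totals: dict[str, Decimal] = {}
    for record in records:
        totals[record.model] = totals.get(record.model, Decimal(0)) + cost_usd(record)
    return totals
```

With records like these, a team can check the bill against its own negotiated rates instead of relying on an opaque credit balance.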

Routing and Compliance Shouldn’t Be Optional

For others, the issue is routing and compliance. A healthcare software CEO was simultaneously in contract negotiations with two different vendors when he reached out. He wanted to know if there was a more open alternative before he signed with either, and was already writing his own model routing layer internally (a CEO, doing infrastructure work) because “the world changes too much to bet on any one solution.”

A separate healthcare data company came to us for a specific technical reason: they work with PHI and can’t route that data through outside vendor infrastructure, but they still need frontier models for tasks that don’t touch patient data. They needed one tool that could route differently based on what was actually in the request. That’s not an unusual ask. It’s compliance.
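Routing on request content can be sketched in a few lines. This is a simplified illustration under stated assumptions, not a description of any customer's setup: it assumes an upstream step has already labeled each request with data classes, and the route names, `DataClass`, and `choose_route` are hypothetical.

```python
from enum import Enum


class DataClass(Enum):
    """Hypothetical data classifications attached to a request upstream."""
    PHI = "phi"
    INTERNAL = "internal"
    PUBLIC = "public"


# Hypothetical route names for illustration only.
SELF_HOSTED = "self-hosted"
FRONTIER_GATEWAY = "frontier-gateway"

# Data classes that must never leave the organization's own infrastructure.
RESTRICTED = {DataClass.PHI}


def choose_route(labels: set[DataClass]) -> str:
    """Pick a backend from the request's data labels.

    Fails closed: an unlabeled request is treated as restricted, and a
    single restricted label is enough to keep the request in-house.
    """
    if not labels or labels & RESTRICTED:
        return SELF_HOSTED
    return FRONTIER_GATEWAY
```

The design choice worth noting is the default: when classification is missing, the request stays on internal infrastructure rather than going to an outside vendor.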

And then there’s the on-prem and sovereignty tier. A defense contractor with CUI requirements told us that on-prem model routing wasn’t optional; it was a contractual necessity. A cloud CTO asked for mixed inference on day one, with some calls going to self-hosted models, others to their existing AWS Bedrock commitments, and the rest through our gateway, because running models is literally his business and single-vendor inference lock-in was a risk he’d already mapped out. The platform engineer at the UK retailer liked the tool he’d been using personally for 18 months, but said plainly, “obviously I can’t bring that to my work environment.” He needed enterprise data controls with his company’s own Bedrock models underneath.

The AI champion at a major fast food chain put it most directly: closed vendors are building something that looks a lot like OpenClaw but locked inside their own walled garden, and that’s precisely why model-agnostic infrastructure matters to her. The capability isn’t the moat. Who controls access to the models is.

The Data Backs This Up

We see this play out in our usage data too, and the numbers are striking. On an average day this month, Kilo users are actively running 348 different models. Yesterday, the top 10 by usage came from six different labs: MiniMax, StepFun, xAI, ByteDance, Anthropic, and NVIDIA. MiniMax was #1 by request volume. The three most popular models combined only covered half of all usage, and a full third of Kilo traffic goes to labs that most people wouldn’t have recognized 18 months ago.

Nearly half of Kilo users run models from more than one lab in a given month, and that share grew from 29% to 46% over the last six weeks. Among organizational customers specifically, 42% used models from two or more labs in a single week, generating 1.1 million requests routed to 19 different labs. The number of labs with 1,000+ weekly active users on Kilo grew from 8 in January to 12 in April.

People also aren’t just switching between projects. Yesterday, 15% of users routed to two or more models within a single hour. Power users average five labs a month. The average Kilo employee, who has every model available and no spend cap, draws from 5.7 labs per month. Even internally, with unlimited access, nobody settles on one lab. Multi-model isn’t a power-user quirk anymore. It’s becoming the default way developers work.

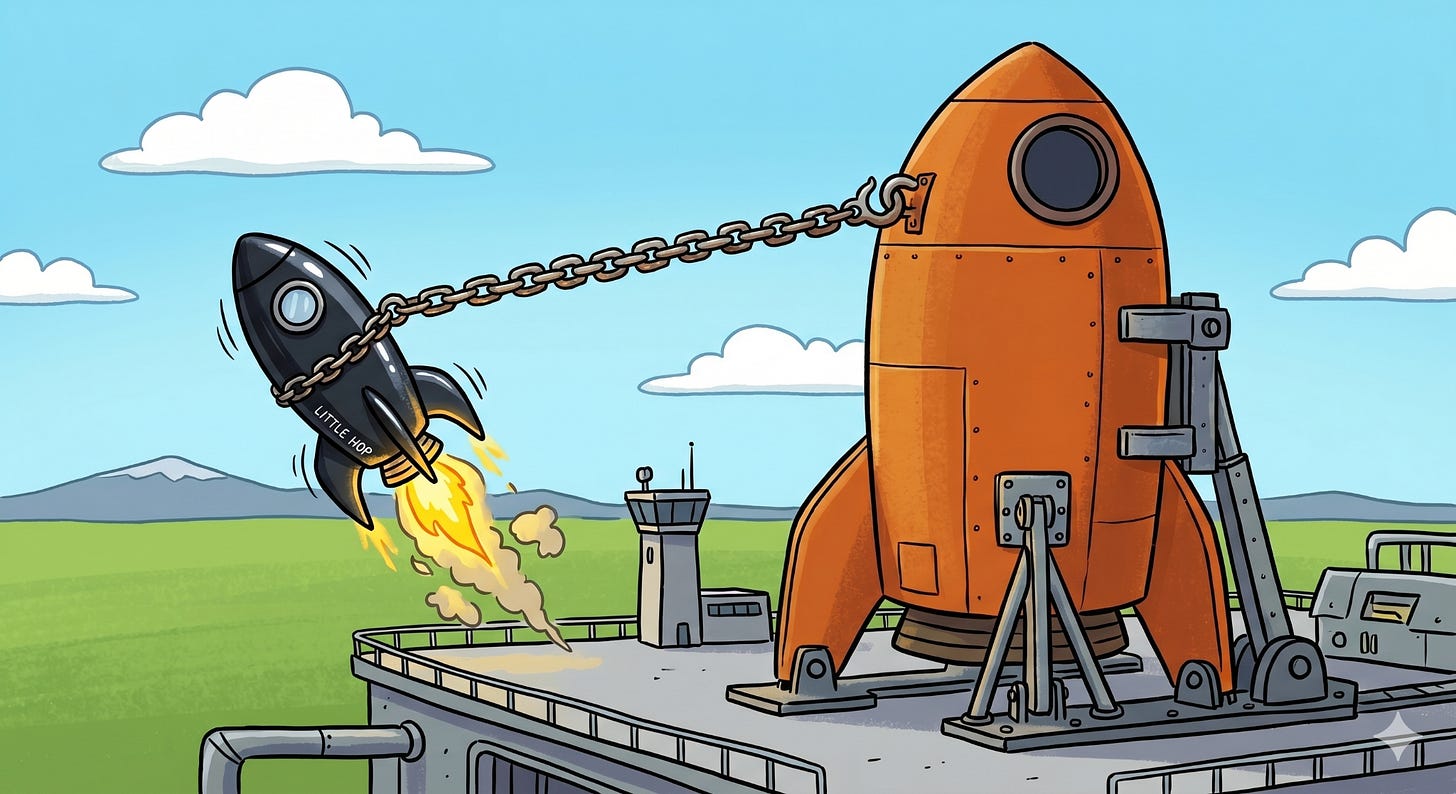

Cursor & SpaceX: The Cost of Structural Dependency

The Cursor/SpaceX deal is worth understanding through this lens. Cursor built a genuinely good product and still ended up in a position where the models at the core of their product were controlled by companies now competing directly against them. The $60 billion acquisition option and access to a million H100s are the cost of buying out of that structural dependency - training their own models so they’re not reliant on infrastructure providers who also ship competing tools. That’s not a Cursor problem. That’s just what it costs to not be dependent on your competitors.

The auto manufacturer waiting on rate limits, the bank that can’t see its token costs, the healthcare company that can’t route PHI externally, the defense contractor with on-prem requirements, the retail engineer who loved a tool he couldn’t bring to work: these are all expressions of the same structural problem. When you don’t own the model layer, the decisions of whoever does become your constraints.

And as frontier labs move further into tooling, the likelihood of those constraints tightening only goes up. One enterprise customer said it plainly: “I do not like vendor lock-in. All the features that these big companies are making to try and lure you in and get vendor lock-in on their flagship models is not something I’m interested in.”

He’s not alone. The market is moving toward infrastructure that stays out of the way, routing intelligently to whatever model fits the task, showing you exactly what it costs, and not requiring you to trust a vendor’s judgment about which models you should have access to. The walled garden is a bet that lock-in wins. Increasingly, the developers and enterprise teams we talk to are betting the other way.

Kilo is the all-in-one agentic engineering platform, open-source and model-agnostic. Install the VS Code extension or get started at app.kilo.ai.
