Kilo Bet on Cerebras 11 Months Before Wall Street Did

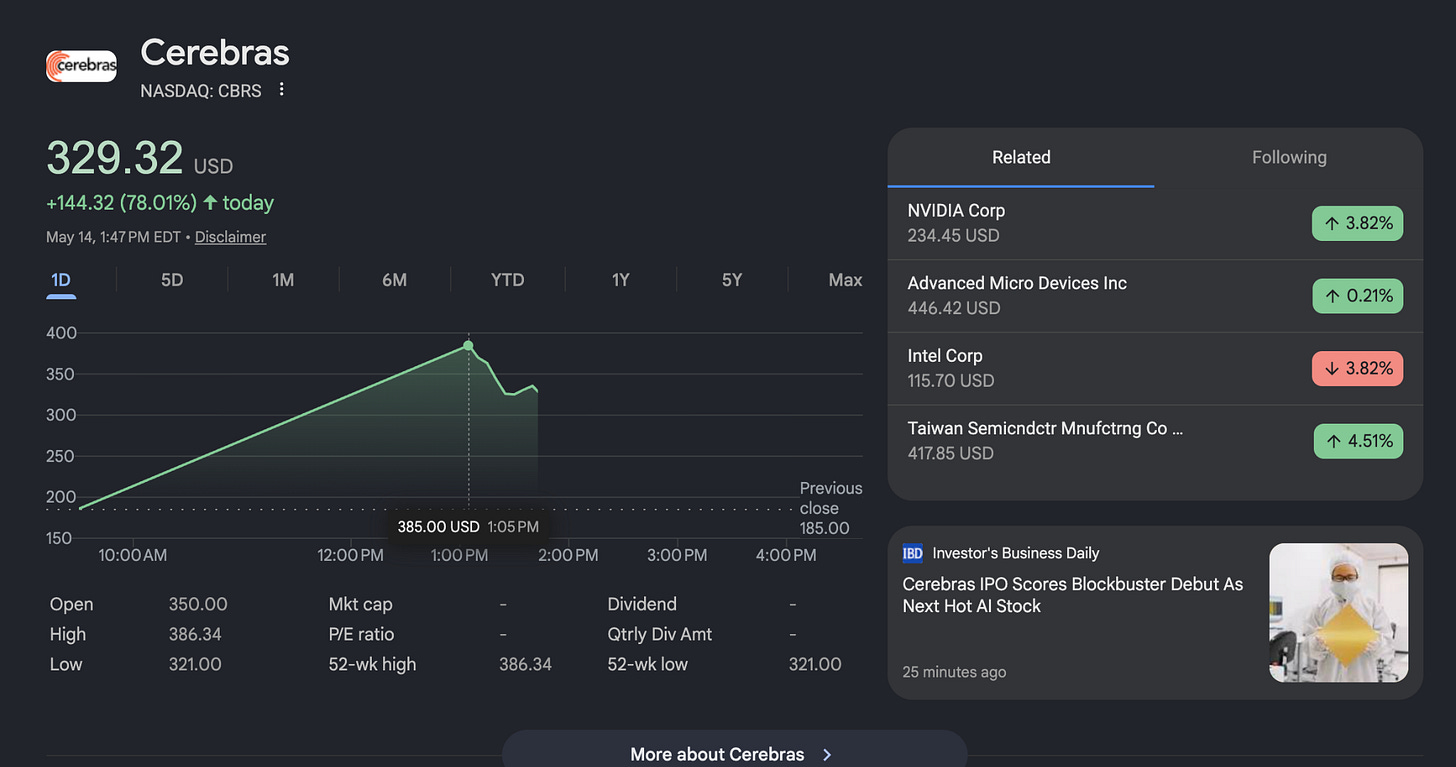

Their $48B IPO just validated the open compute thesis

Eleven months ago, Kilo shipped support for Cerebras inference as a first-class provider in the IDE extension. It was PR #777, it landed in v4.39.0, and at the time it was a quietly opinionated move.

The story everyone was telling back then was that AI coding was going to consolidate around one or two model providers and, consequently, one kind of compute. Kilo was building toward an alternative assumption: that engineers would want to use a dozen different models routed to a dozen kinds of silicon, picked per task, with no one provider owning the entire stack.

This week, the market wrote a $48 billion check on that assumption.

Cerebras priced its IPO at a fully diluted valuation north of $48 billion on an order book reportedly oversubscribed 20 times. It’s the biggest US listing in close to five years. And while the financial pages will spend the rest of the week talking about wafer economics and OpenAI warrants, the more interesting story for anyone who codes for a living is what the IPO actually confirms about where AI compute is going.

The thesis under the IPO

The reason Cerebras can price like this is that AI compute is splitting into specialized lanes. Training is one job. Memory-hungry agentic work is another. Fast reasoning where someone is waiting on tokens is a third. No single chip wins all three, and engineers who can route the right task to the right silicon are going to outpace engineers locked to one stack. That’s the bet inside the $48B valuation, and it’s the same bet Kilo’s product has been organized around since day one.

Cerebras specifically owns the fast-reasoning lane. When a model fits on its wafer-scale chip, you get tokens-per-second numbers that change what coding with an AI feels like. A reasoning chain that takes a minute and a half on a GPU can finish in single-digit seconds. Anyone who has watched a model “think” for 90 seconds before answering a real question knows how big that gap is, especially when it comes to minor implementation tasks and quick revisions in a coding editor.
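The arithmetic behind that gap is simple enough to sketch. The snippet below is a back-of-the-envelope illustration, not a benchmark: the token count and both throughput figures are made-up placeholders chosen only to land on the "90 seconds versus single-digit seconds" shape described above, and the function name `generation_seconds` is hypothetical. It also ignores queueing and time-to-first-token.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Rough streaming time for a response: tokens divided by throughput."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# Illustrative placeholders only. These are NOT measured numbers for any chip.
REASONING_TOKENS = 9_000      # assumed length of a reasoning chain
SLOW_TOKENS_PER_SEC = 100.0   # assumed throughput on the slower path
FAST_TOKENS_PER_SEC = 2_000.0 # assumed throughput on the faster path

slow = generation_seconds(REASONING_TOKENS, SLOW_TOKENS_PER_SEC)
fast = generation_seconds(REASONING_TOKENS, FAST_TOKENS_PER_SEC)
speedup = slow / fast

print(f"slow path: {slow:.1f}s, fast path: {fast:.1f}s, speedup: {speedup:.0f}x")
```

Because wait time scales inversely with throughput, any large jump in tokens per second shows up directly as a shorter pause in the editor, which is why the difference is most noticeable on small, frequent tasks.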

Why Kilo was building toward this all along

Kilo’s product is shaped by one bet repeated in every direction: openness compounds. Open pricing means you pay the provider’s actual rate with no markup. Open model selection means 500-plus models behind one dropdown, switchable mid-session. Open source means the harness your agent runs in is something you can read, fork, and improve. None of these are features bolted onto a closed product. They’re the spine.

The reason that spine matters is most obvious right now, with Cerebras pricing. If your coding agent is wired to a single provider, the heterogeneous compute future is happening to you, not for you. Every new specialized chip becomes a thing you can’t use. Every shift in which model is best at which task becomes a vendor negotiation. The whole point of an open agentic platform is that fragmentation is fuel, not friction. New silicon arrives, Kilo makes it accessible, and the engineer can move faster.

This is why Cerebras landed in Kilo eleven months ago and not last week. The same logic that said “support Cerebras early” also said support every other model worth running, build a gateway that bills at cost, keep the source open so providers can contribute back. The IPO didn’t change the strategy; it confirmed that the strategy was reading the market correctly.

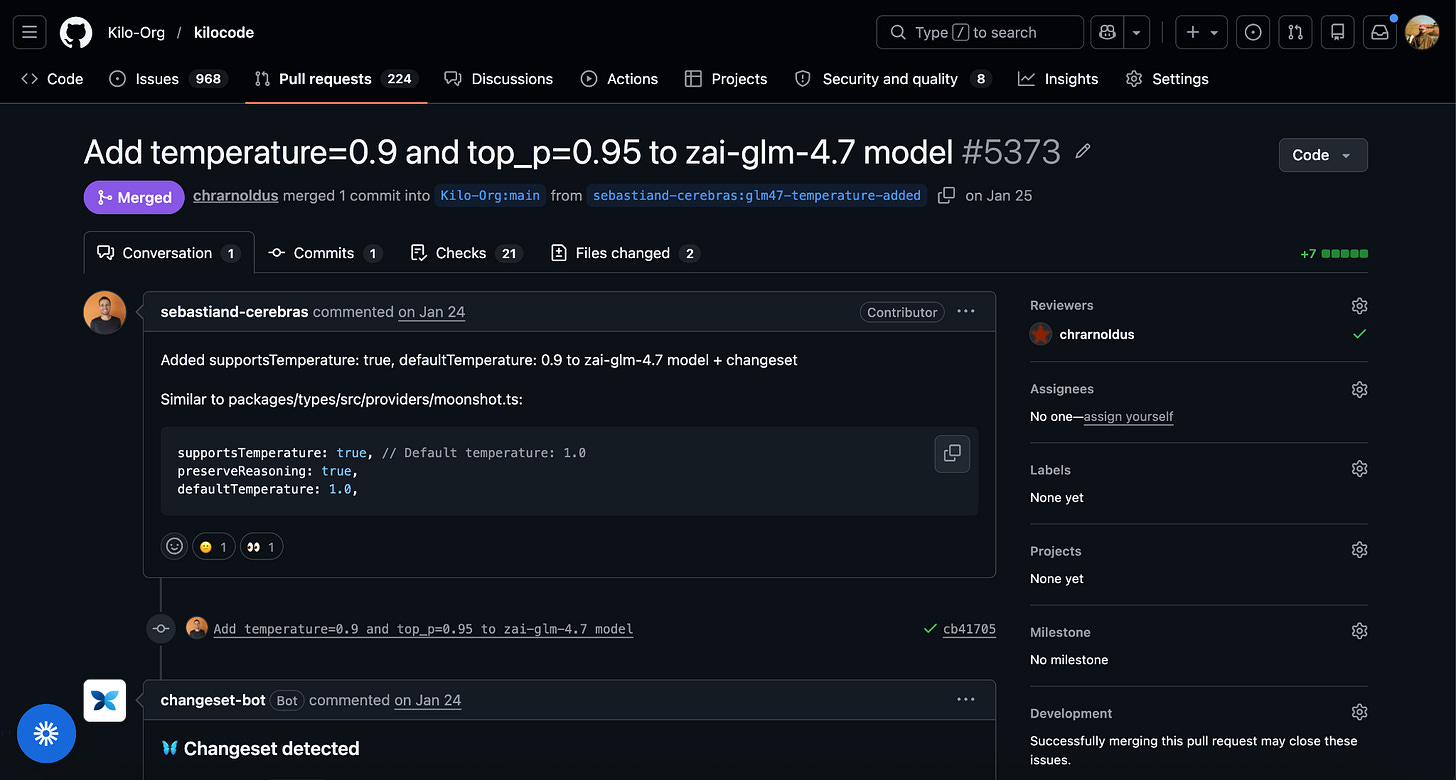

The collaboration behind the integration

It’s worth saying something about how Cerebras support in Kilo has actually stayed sharp, because it’s the part of the story that doesn’t show up in a press release.

For most of the last year, engineers from Cerebras have been contributing to the Kilo codebase directly. Not in a partnership-announcement way; in a pull-request way. Updating the model lineup as new ones launch. Tuning parameters. Wiring up integration headers. The unglamorous runtime work that determines whether a provider feels first-class or just feels supported.

A coding model is only as good as the harness behind it. Tool calls, file edits, plan steps, diff review, context management, all the parts that don’t show up in benchmarks. Cerebras putting hours into making that harness tight inside Kilo, in public, in PRs anyone can read, is what working with an open platform actually looks like. It’s why a Kilo user hitting Cerebras today is using something that’s been shaped by the people who built the chip, not just bolted on by us.

That kind of relationship only happens in the open. A closed agent forces every chip company into a vendor negotiation. An open one lets them ship code.

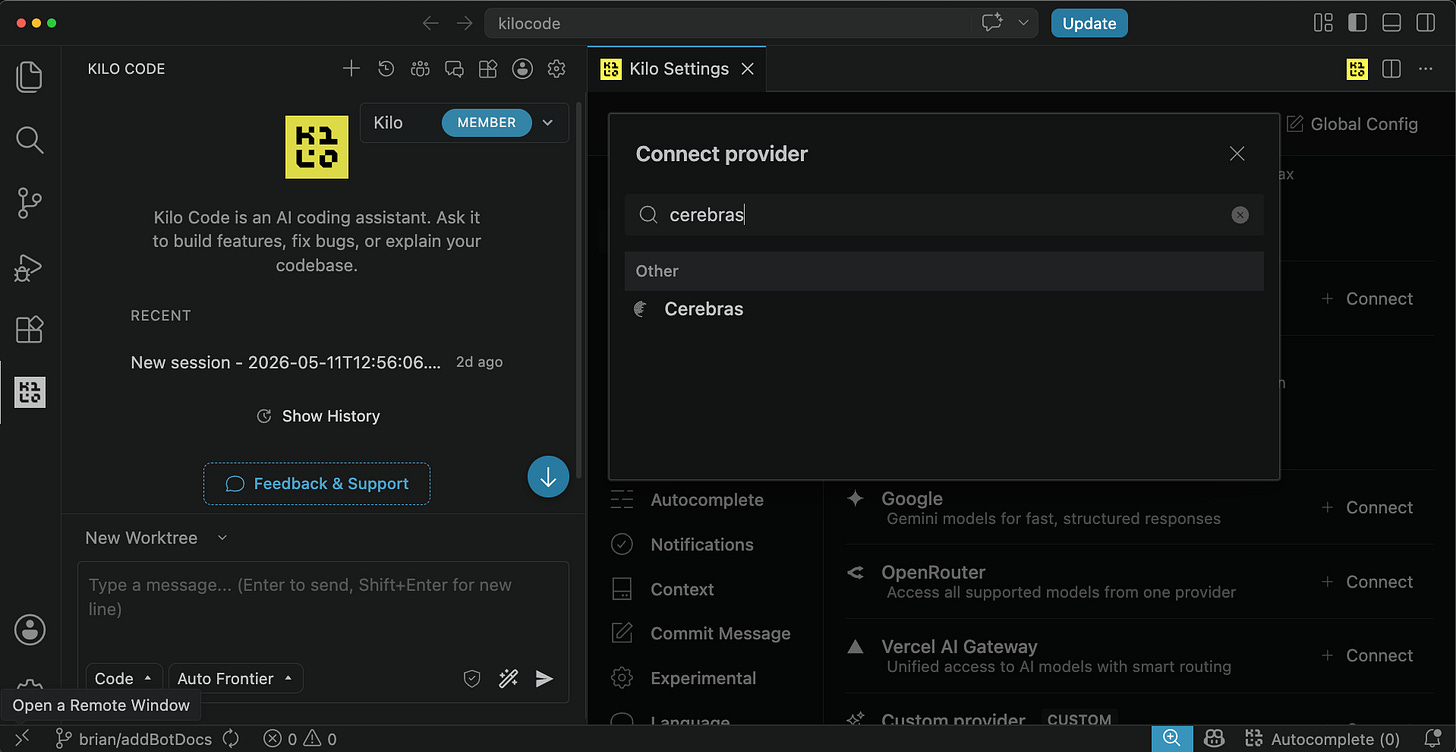

What heterogeneous compute looks like inside the editor

Here’s the version of the open compute story that actually shows up in a developer’s day. Open the provider menu in the Kilo IDE extension. Cerebras is there, next to Anthropic, OpenAI, and the 500-plus models on Kilo Gateway. Pick a wafer-scale model and have Kilo’s Plan mode plan a refactor across five files. The design comes back before you’ve finished reading the prompt you wrote.

Then, for the next task, pick something different. A Claude model for a delicate code review. A long-context model for repo-wide refactoring. Same workflow, but a different chip running underneath, picked because it fits the task, not because it’s the only one available.
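For readers who think in code, the per-task choice above amounts to a small lookup table. This is a minimal sketch of the idea, not Kilo's implementation or API: in the extension you make the choice by hand from the provider menu. The task names, the `Route` type, the `pick_route` helper, and every model ID below are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    provider: str  # which backend serves the request
    model: str     # placeholder model ID, not a real model name


# Hypothetical mapping from kind of task to the provider that suits it.
ROUTES = {
    "quick_edit": Route("cerebras", "example-fast-model"),
    "code_review": Route("anthropic", "example-claude-model"),
    "repo_refactor": Route("kilo-gateway", "example-long-context-model"),
}

# Fallback for task kinds the table does not know about.
DEFAULT_ROUTE = ROUTES["code_review"]


def pick_route(task_kind: str) -> Route:
    """Return the route for a task kind, or the default if it is unknown."""
    return ROUTES.get(task_kind, DEFAULT_ROUTE)


for kind in ("quick_edit", "repo_refactor", "write_docs"):
    route = pick_route(kind)
    print(f"{kind} -> {route.provider} ({route.model})")
```

The point of keeping the table as plain data is that adding a new kind of silicon is one more entry, not a rewrite of the workflow.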

That’s the experience the Cerebras IPO is signaling the market wants. It’s also the experience Kilo has been building since before the IPO was on the calendar.

The market signal

Specialized inference silicon is no longer an interesting bet. It’s an obvious one. Cerebras going public at this valuation is the loudest confirmation yet that the inference market belongs to whoever can match the right workload to the right hardware. Specialized chips will keep arriving. Some will win their lanes, some won’t. The agentic platforms that thrive will be the ones engineers can trust to add the good ones quickly, route between them honestly, and never lock the choice down.

Kilo is the coding agent that has been building for that world all along. We were excited about Cerebras eleven months ago. We’ll be on whatever launches next month. The bet was always that openness wins as the compute landscape gets more interesting, and the compute landscape just got a lot more interesting.

The convenient part for anyone with an editor open: none of this is a roadmap. It’s already in the menu.

Try Kilo with Cerebras: install the VS Code or JetBrains extension, or run `npm install -g @kilocode/cli`, then add a Cerebras key under Settings → API Provider, or use Kilo Credits at provider-rate pricing.